Your Multimodal Models: The VRAM Requirements You Can't Ignore

Sentence Transformers now offers multimodal embedding and reranker models. Learn the VRAM requirements for the Qwen3-VL-2B and 8B variants so you can plan your infrastructure effectively.

Editorial Note

Reviewed and analyzed by ScoRpii Tech Editorial Team.

Understanding Multimodal Embeddings

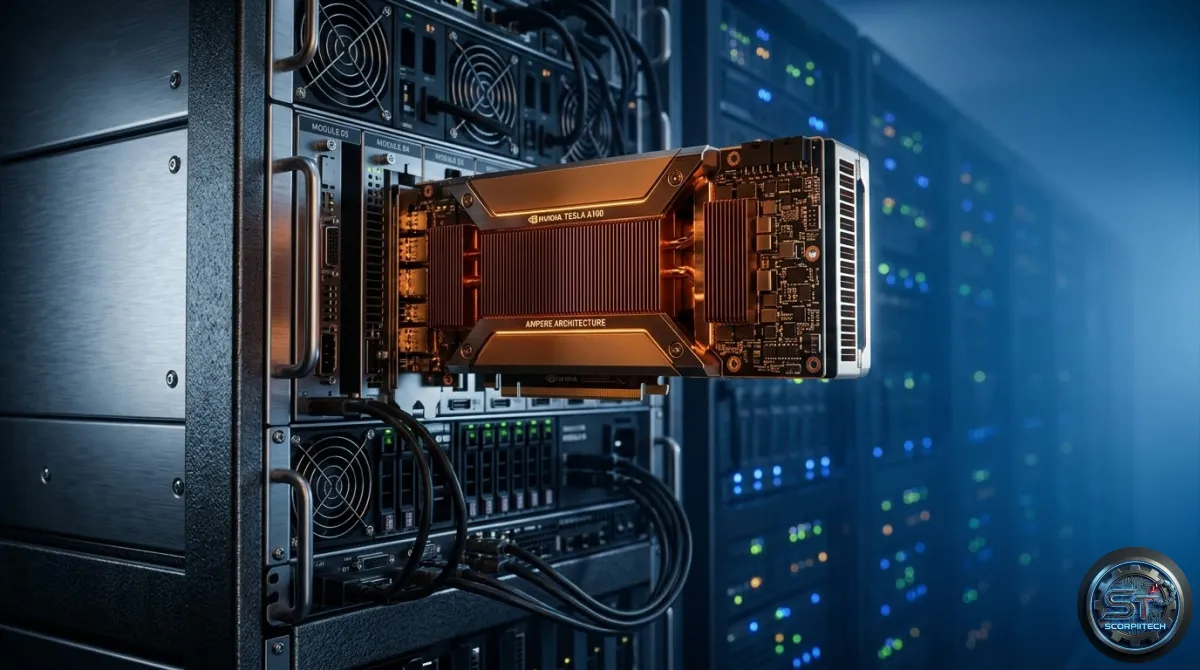

When you deploy multimodal embedding models, you're enabling applications to comprehend and correlate information across different data types, such as text, images, or audio. This has significant infrastructure and business implications, as it requires substantial hardware resources to ensure operational stability and performance. For instance, deploying the base Qwen3-VL-2B model demands a GPU equipped with at least 8 GB of VRAM.

These models work by transforming inputs from distinct modalities into numerical vectors within a unified, shared embedding space. This transformation allows direct mathematical comparison between representations of different types. If two inputs are semantically similar, regardless of their original modality, their embedding vectors will be close to each other in this shared space.
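To make "close to each other in this shared space" concrete, here is a minimal sketch of how closeness is commonly measured with cosine similarity. The 4-dimensional vectors are hypothetical values invented for illustration, not real model output, and the article does not state which similarity measure these models use.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings (assumed values, not produced by any model).
text_query = [0.9, 0.1, 0.0, 0.2]     # stands in for the text "a photo of a cat"
cat_image = [0.8, 0.2, 0.1, 0.3]      # stands in for an image of a cat
invoice_image = [0.0, 0.9, 0.4, 0.1]  # stands in for a scanned invoice

sim_cat = cosine_similarity(text_query, cat_image)
sim_invoice = cosine_similarity(text_query, invoice_image)

# The semantically related pair scores higher than the unrelated pair.
print(f"text vs cat image:     {sim_cat:.3f}")
print(f"text vs invoice image: {sim_invoice:.3f}")
```

Because both modalities land in the same space, the same function compares text to image, image to image, or text to text without any special casing.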

The Mechanics of Multimodal Relevance Scoring

Beyond embeddings, you can use multimodal reranker models to refine relevance scoring for mixed-modality pairs. That lets you not only find similar items across modalities but also rank the most relevant text-image pairs with a more nuanced judgment. A reranker takes a pair of inputs, such as an image and a textual query, and outputs a score indicating their semantic alignment.

To achieve this, you can use models like Qwen3-VL-2B, which offer a range of features, including:

- Support for multiple data types, including text, images, and audio

- Ability to refine relevance scoring for mixed-modality pairs

- Scalability to handle large volumes of data
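The scoring step described above can be sketched as a small reranking loop: score each (query, candidate) pair, then sort by score. The `rerank` helper and the `toy_score` function are hypothetical names introduced here; `toy_score` is a word-overlap stand-in that operates on captions, whereas a real multimodal reranker would score the image itself.

```python
from typing import Callable, List, Tuple


def rerank(
    query: str,
    candidates: List[str],
    score_pair: Callable[[str, str], float],
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Score every (query, candidate) pair and return the top_k by score."""
    scored = [(candidate, score_pair(query, candidate)) for candidate in candidates]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


def toy_score(query: str, caption: str) -> float:
    """Stand-in scorer (assumption): fraction of query words found in the caption."""
    query_words = set(query.lower().split())
    caption_words = set(caption.lower().split())
    if not query_words:
        return 0.0
    return len(query_words & caption_words) / len(query_words)


# Captions stand in for images that a first-stage embedding search returned.
candidates = [
    "bowl of fruit",
    "red bicycle leaning on a wall",
    "red sports car on a track",
]

ranked = rerank("red car", candidates, toy_score, top_k=2)
for caption, score in ranked:
    print(f"{score:.2f}  {caption}")
```

Passing the scorer in as a callable keeps the ranking logic independent of the model, so the stand-in could be swapped for a real reranker without changing `rerank`.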

Infrastructure Demands and Your Operations

Implementing these multimodal models carries substantial hardware implications for your infrastructure. For scenarios involving larger 8B variants of these models, your VRAM requirement escalates significantly, demanding approximately 20 GB per GPU. This hardware prerequisite directly impacts your deployment strategy, whether you're leveraging cloud platforms or managing on-premise GPU clusters.
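As a rough sanity check on these figures, the sketch below estimates the memory taken by model weights alone as parameter count times bytes per parameter. The nominal counts (2 billion and 8 billion) and the 16-bit precision are assumptions; the article does not say which precision its VRAM figures assume, and real usage also includes activations, input buffers, and framework overhead.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weight_memory_gb(num_params, dtype="bf16"):
    """Weights-only memory in GB (1 GB = 1e9 bytes); excludes activations."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# (label, nominal parameter count, VRAM figure stated in this article)
variants = [("2B", 2e9, 8), ("8B", 8e9, 20)]

for label, params, stated_gb in variants:
    weights_gb = weight_memory_gb(params, "bf16")
    headroom_gb = stated_gb - weights_gb
    print(
        f"{label}: ~{weights_gb:.0f} GB of weights at bf16, "
        f"~{headroom_gb:.0f} GB left within the stated {stated_gb} GB"
    )
```

Under these assumptions the stated requirements sit a few gigabytes above the weights-only estimate, which is consistent with leaving room for activations and image inputs at inference time.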

To ensure smooth operations, you should consider the following:

- Assess your existing GPU compute resources against the stated VRAM requirements

- Plan for scalability to handle increasing volumes of data

- Monitor performance and adjust your infrastructure as needed
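The first item in the checklist above can be automated with a short script. This is a minimal sketch: the `REQUIRED_VRAM_GB` table reuses the 8 GB and 20 GB figures from this article, the "Qwen3-VL-8B" key is only a label for the 8B variant, and `detect_gpu_vram_gb` assumes PyTorch is installed (it returns an empty list otherwise).

```python
# VRAM figures stated in this article; the keys are labels, not exact model IDs.
REQUIRED_VRAM_GB = {"Qwen3-VL-2B": 8, "Qwen3-VL-8B": 20}


def models_that_fit(gpu_vram_gb):
    """Return the model labels whose stated requirement fits on one GPU."""
    return [
        name
        for name, required_gb in REQUIRED_VRAM_GB.items()
        if gpu_vram_gb >= required_gb
    ]


def detect_gpu_vram_gb():
    """Total memory per visible CUDA GPU in GB; empty if PyTorch or CUDA is absent."""
    try:
        import torch
    except ImportError:
        return []
    if not torch.cuda.is_available():
        return []
    return [
        torch.cuda.get_device_properties(index).total_memory / 1e9
        for index in range(torch.cuda.device_count())
    ]


if __name__ == "__main__":
    gpus = detect_gpu_vram_gb()
    if not gpus:
        print("No CUDA GPU detected (or PyTorch is not installed).")
    for index, vram_gb in enumerate(gpus):
        fits = models_that_fit(vram_gb) or ["none of the listed models"]
        print(f"GPU {index}: {vram_gb:.1f} GB total, fits: {', '.join(fits)}")
```

Total memory is an upper bound: other processes and the CUDA context also consume VRAM, so treat a GPU that only just meets the threshold as a marginal fit.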

What This Means For You

The availability of these multimodal embedding and reranker models provides you with powerful tools for building more sophisticated AI applications. You can now develop systems that truly understand and correlate information across different data types, from semantic search for images using text queries to enhanced content recommendation engines.

The Bottom Line for Developers

As you develop and deploy multimodal models, it's essential to consider the infrastructure demands and operational implications. By carefully planning and provisioning your hardware resources, you can ensure smooth operations and unlock the full potential of these powerful models.

Originally reported by

Hugging Face Blog
