Your Content Pipeline Just Reported FAILED_EXTRACTION: What It Really Means

When your automated content ingestion reports FAILED_EXTRACTION, it signals a critical upstream data...

Discover why using your laptop on soft surfaces like a bed or couch can cause serious overheating an...

When migrating vLLM from V0 to V1, prioritize backend correctness. Learn why issues in processed rol...

No new facts from OpenAI Research mean your engineering roadmap isn't changing today. Understand how...

Discover EMO, a new mixture-of-experts (MoE) model pretrained end-to-end for emergent modularity. Un...

If you're operating LLM inference, you're likely bottlenecked. Discover how Shepherd Model Gateway's...

Discover essential tips for PC cleaning and maintenance to boost performance and extend the life of...

Google Cloud just launched two new AI chips, aiming to compete with Nvidia. Discover what this innov...

Meta directly addresses wasted compute cycles in AI training by optimizing Effective Training Time (...

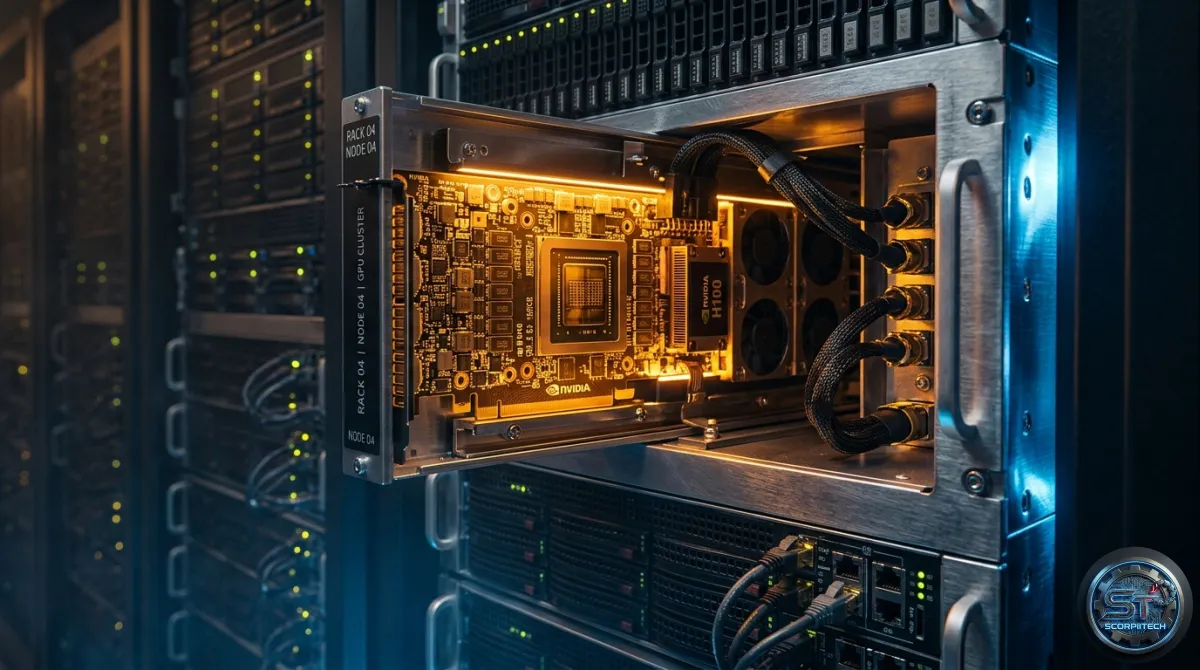

Nvidia's warranty claims for GPUs jumped 1,000% from 2024 to 2025, raising questions. Discover what...

Sentence Transformers has made multimodal models available. Learn the VRAM requirements for Qwen3-VL...

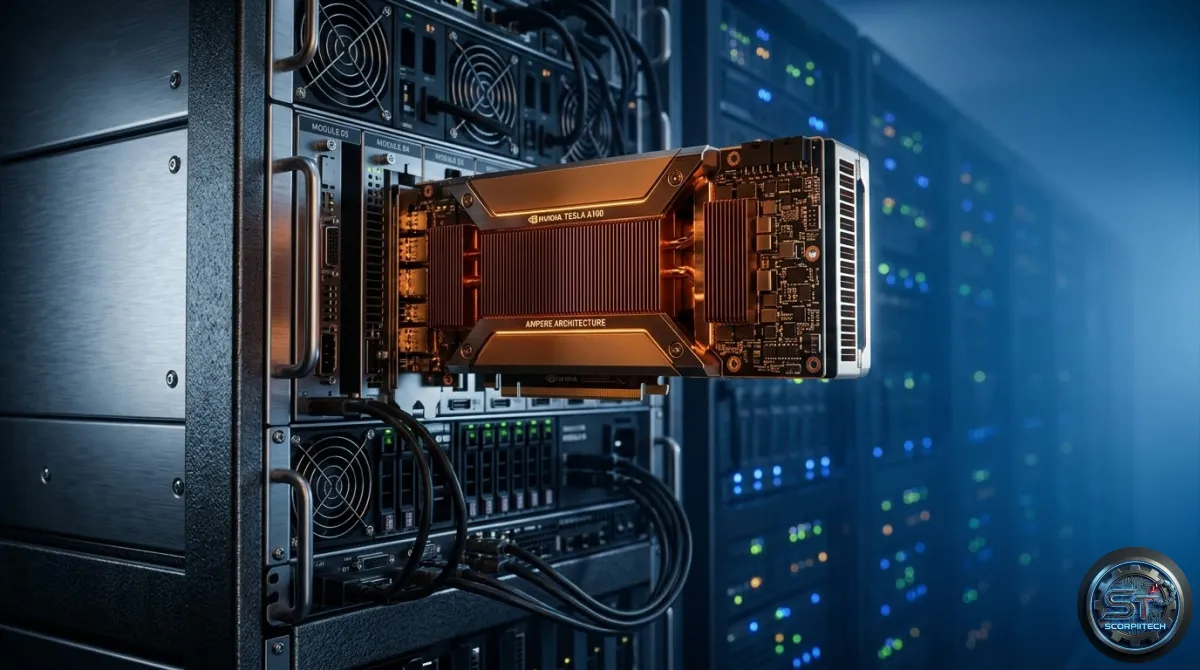

NVIDIA's Blackwell B200 leverages MXFP8 and NVFP4 to accelerate your diffusion models. Understand th...