Your Container Scaling Might Be Throttled by Your CPU Architecture

Discover how Netflix resolved 30-second health-check timeouts during container launches by analyzing VFS lock contention and NUMA architecture on AWS instances.

Editorial Note

Reviewed and analyzed by the ScoRpii Tech Editorial Team.

In this article

- Container Launch Times Can Stall Your Systems

- The Mount Operation Problem

- NUMA Architecture and Interconnect Latency

- OverlayFS Overhead

- Microarchitectural Bottlenecks: Intel TMA Analysis

- Hardware Architecture Matters: Mesh vs. Chiplet

- Software Mitigations and Containerd Optimization

- Infrastructure Impact

- The Bottom Line for Developers

Container Launch Times Can Stall Your Systems

You rely on rapid container scaling to meet application demand, but high-density deployments can quickly expose fundamental limits in your infrastructure. Netflix found that when scaling container workloads with Kubelet and containerd, the number of mount operations grows linearly with both container count and image layer depth. Under heavy launch activity this can stall the system, with health checks timing out for up to 30 seconds and directly affecting service availability.

The Mount Operation Problem

The core issue stems from the sheer volume of mount operations required when launching numerous containers with layered images. For example, launching 100 containers, each with 50 image layers, necessitates approximately 20,200 mount operations (calculated as 100 * 2 * (1 + 50 + 50)). These operations place significant strain on the Linux kernel’s Virtual Filesystem (VFS) and its associated locks.
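The arithmetic is easy to reproduce. The sketch below encodes the formula exactly as the article gives it; it makes no claim about which specific mounts the factor of 2 and the two per-layer terms correspond to, and the function name is hypothetical.

```python
def estimated_mount_ops(containers: int, layers_per_image: int) -> int:
    """Total mount operations per the article's formula: containers * 2 * (1 + layers + layers)."""
    if containers < 0 or layers_per_image < 0:
        raise ValueError("counts must be non-negative")
    return containers * 2 * (1 + layers_per_image + layers_per_image)


if __name__ == "__main__":
    print(estimated_mount_ops(100, 50))  # 20200, the article's example
    # Linear in both inputs: doubling containers doubles the total exactly,
    # while doubling layer depth roughly doubles it.
    for containers, layers in [(100, 50), (200, 50), (100, 100)]:
        print(containers, layers, estimated_mount_ops(containers, layers))
```

Because both inputs multiply into the total, a fleet that doubles container density and image depth at the same time sees roughly four times as many mount operations.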

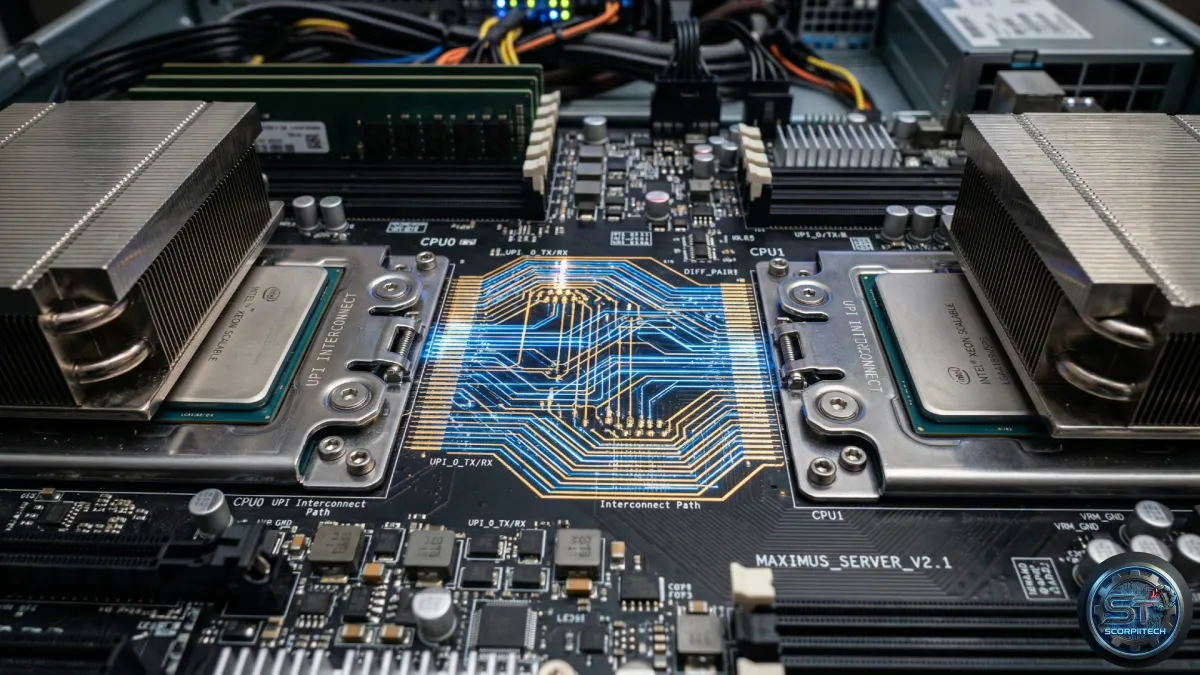

NUMA Architecture and Interconnect Latency

Non-Uniform Memory Access (NUMA) architecture, common in modern cloud servers, introduces its own performance complexities. A NUMA system divides memory into nodes, each local to a subset of CPUs; reaching another node's memory is a remote access and incurs higher latency. When your container runtime performs thousands of operations on global kernel locks, the cache lines holding those locks must move between nodes, and the interconnect latency between NUMA nodes becomes a primary performance bottleneck. If your processes aren’t NUMA-aware, they can spend significant time waiting for data to cross socket boundaries.
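Before reasoning about NUMA effects, it helps to see how many nodes a host actually exposes. A minimal sketch, assuming a Linux host that publishes topology under `/sys/devices/system/node/node<N>/cpulist`; the helper names are hypothetical, and `pin_to_node` only sets CPU affinity (under Linux's default local allocation policy, memory a pinned process touches first is typically placed on that node, but this sketch does not set a memory policy explicitly).

```python
import glob
import os


def parse_cpulist(text: str) -> list[int]:
    """Expand a Linux cpulist string such as "0-3,8-11" into sorted CPU ids."""
    cpus: set[int] = set()
    for part in text.strip().split(","):
        if not part:
            continue  # memory-only nodes have an empty cpulist
        if "-" in part:
            first, last = part.split("-")
            cpus.update(range(int(first), int(last) + 1))
        else:
            cpus.add(int(part))
    return sorted(cpus)


def numa_nodes(root: str = "/sys/devices/system/node") -> dict[int, list[int]]:
    """Map each NUMA node id to its CPUs; returns {} if no node directories exist."""
    nodes: dict[int, list[int]] = {}
    for path in glob.glob(os.path.join(root, "node[0-9]*", "cpulist")):
        node_dir = os.path.basename(os.path.dirname(path))  # e.g. "node0"
        with open(path) as f:
            nodes[int(node_dir[len("node"):])] = parse_cpulist(f.read())
    return dict(sorted(nodes.items()))


def pin_to_node(node: int, nodes: dict[int, list[int]]) -> None:
    """Restrict the calling process (pid 0) to one node's CPUs. Linux only."""
    os.sched_setaffinity(0, nodes[node])


if __name__ == "__main__":
    topology = numa_nodes()
    for node, cpus in topology.items():
        print(f"node{node}: {len(cpus)} CPUs")
    if len(topology) > 1:
        print("multiple NUMA nodes: cross-node lock traffic is possible")
```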

OverlayFS Overhead

OverlayFS, a union mount filesystem used by container runtimes like Docker and containerd, combines multiple image layers into a single virtual filesystem. While efficient, each layer adds complexity to the mount table. The kernel treats each layer as a distinct mount point, requiring traversal and tracking for file path resolution. Hundreds of containers simultaneously mounting multi-layered images compete for VFS locks, leading to the contention observed by Netflix.
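For reference, this is roughly how layers are handed to OverlayFS. The sketch builds the `lowerdir=...,upperdir=...,workdir=...` option string the kernel's overlay filesystem accepts; in `lowerdir`, the leftmost directory is the top of the stack and the rightmost is the base. The helper name and paths are hypothetical, and the sketch simply rejects paths containing commas or colons rather than escaping them.

```python
def overlay_mount_options(lower_layers: list[str], upper: str, work: str) -> str:
    """Build overlay mount data; lower_layers is ordered topmost layer first, base layer last."""
    if not lower_layers:
        raise ValueError("overlay needs at least one lower layer")
    for path in [*lower_layers, upper, work]:
        # ',' separates options and ':' separates lower layers, so refuse ambiguous paths.
        if "," in path or ":" in path:
            raise ValueError(f"path contains a separator character: {path}")
    return f"lowerdir={':'.join(lower_layers)},upperdir={upper},workdir={work}"


if __name__ == "__main__":
    # Hypothetical layer paths, topmost first.
    layers = [f"/layers/{i}" for i in range(3, 0, -1)]
    print(overlay_mount_options(layers, "/container/upper", "/container/work"))
    # lowerdir=/layers/3:/layers/2:/layers/1,upperdir=/container/upper,workdir=/container/work
```

The resulting string is what you would pass via `-o` to `mount -t overlay overlay -o <options> <target>`. With 50-layer images, the `lowerdir` list alone names 50 directories per container.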

Microarchitectural Bottlenecks: Intel TMA Analysis

Netflix’s investigation, using Intel’s Topdown Microarchitecture Analysis (TMA), revealed several microarchitectural stalls on affected nodes: Frontend Bound stalls attributed to L1D_MISS_PIPE_PROB, Bad Speculation attributed to BACLEAR, and Backend Bound stalls at the L1, L2, and L3 cache levels as well as in memory generally. Together, these indicate that the CPU spends excessive time managing cache coherency and recovering from branch mispredictions instead of retiring useful work from your code.
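To make the four top-level TMA categories concrete, here is a minimal sketch that turns pipeline-slot tallies into a level-1 breakdown. The `SlotCounts` input format is hypothetical: real tools derive these tallies from model-specific PMU events, which this sketch does not read. It follows TMA's level-1 convention of treating Backend Bound as whatever share remains after Frontend Bound, Bad Speculation, and Retiring.

```python
from dataclasses import dataclass


@dataclass
class SlotCounts:
    """Pipeline-slot tallies for one measurement window (hypothetical input format)."""
    total: int            # all issue slots in the window
    frontend_bound: int   # slots the frontend failed to fill
    bad_speculation: int  # slots spent on work that was later discarded
    retiring: int         # slots that retired useful micro-ops


def tma_level1(c: SlotCounts) -> dict[str, float]:
    """Level-1 breakdown; Backend Bound is the remainder, per TMA's definition."""
    if c.total <= 0:
        raise ValueError("total slots must be positive")
    fe = c.frontend_bound / c.total
    bs = c.bad_speculation / c.total
    ret = c.retiring / c.total
    be = max(0.0, 1.0 - fe - bs - ret)
    return {"frontend_bound": fe, "bad_speculation": bs, "backend_bound": be, "retiring": ret}


if __name__ == "__main__":
    # Illustrative numbers only, not measurements from the Netflix investigation.
    sample = SlotCounts(total=1_000_000, frontend_bound=150_000,
                        bad_speculation=100_000, retiring=200_000)
    for name, share in tma_level1(sample).items():
        print(f"{name:16s} {share:6.1%}")
```

A low Retiring share paired with a large Backend Bound share is the pattern described above: most slots go to waiting on caches and memory rather than completing instructions. On Linux, `perf stat --topdown` can report these level-1 categories on supported Intel CPUs.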

Hardware Architecture Matters: Mesh vs. Chiplet

The physical CPU architecture significantly impacts performance. Netflix compared AWS instance types:

- m7i.metal-24xl: 7th-generation EC2 instance with an Intel processor; single socket, single NUMA domain, and a centralized mesh-style cache architecture with a “Table of Requests” (TOR) queue.

- m7a.24xlarge: 7th-generation EC2 instance with an AMD processor; single socket, single NUMA domain, and a distributed chiplet-based architecture.

Even with Hyperthreading (HT) disabled on the m7i, the m7a outperformed it by approximately 20% in high-contention scenarios. The distributed cache architecture of the m7a proved more resilient to VFS lock contention than the centralized mesh interconnect on the m7i.

Software Mitigations and Containerd Optimization

Netflix evaluated several mitigation paths. Newer kernel mount APIs such as fsopen(), fsconfig(), and fsmount() offer potential improvements, but they require a recent kernel (this API first appeared in Linux 5.2). Instead, Netflix implemented an optimization in upstream containerd that minimizes per-layer mount operations and thereby reduces pressure on the VFS lock. Rather than mapping each layer individually, it maps the common parent directory of all layers once, reducing the number of mount operations per container from O(n) to O(1), where n is the number of layers in the image.
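The idea of mapping a shared parent can be sketched in a few lines. This is a hypothetical illustration, not containerd's code: it finds the common parent of the layer directories with `os.path.commonpath` and expresses each layer relative to it, so that one mapping of the parent can stand in for one mapping per layer. The snapshot path is modeled on containerd's overlayfs snapshotter layout and should be treated as an assumption, as should the simple `mappings_required` cost model.

```python
import os


def map_common_parent(layer_dirs: list[str]) -> tuple[str, list[str]]:
    """Return the deepest directory shared by all layers, plus each layer's path relative to it."""
    if not layer_dirs:
        raise ValueError("need at least one layer directory")
    parent = os.path.commonpath(layer_dirs)
    return parent, [os.path.relpath(d, parent) for d in layer_dirs]


def mappings_required(layer_count: int, share_parent: bool) -> int:
    """Hypothetical cost model: one mapping per layer (O(n)) vs. one shared mapping (O(1))."""
    return 1 if share_parent else layer_count


if __name__ == "__main__":
    # Path modeled on containerd's overlayfs snapshotter layout (an assumption).
    root = "/var/lib/containerd/io.containerd.snapshotter.v1.overlayfs/snapshots"
    layers = [f"{root}/{i}/fs" for i in range(1, 51)]
    parent, relative = map_common_parent(layers)
    print(parent)         # the snapshots root
    print(relative[:2])   # ['1/fs', '2/fs']
    print(mappings_required(len(layers), False), "->", mappings_required(len(layers), True))  # 50 -> 1
```

The design trade-off is that all layers must live under one directory tree for a single shared mapping to cover them, which is the case when a snapshotter stores every layer beneath a common root.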

Infrastructure Impact

This investigation highlights the critical interplay between software and hardware when scaling containerized applications. You can no longer treat the underlying hardware as a commodity: the choice between a two-NUMA-node system and a single-NUMA-node system can determine whether your deployment succeeds or stalls. If you cannot reduce image layer counts, you must either optimize your container runtime to batch mount operations or choose CPU architectures that keep cache-coherency latency low under high concurrency.

The Bottom Line for Developers

Container scaling isn’t just about code; it’s about understanding the underlying hardware. Monitor your container launch times and mount table sizes. Profile your nodes, not just your applications, to identify VFS lock contention in the kernel. Consider the NUMA topology and cache characteristics of your chosen instance types. Prioritize optimizing your container images and runtime configurations to minimize mount operations. Finally, remember that a seemingly small change in hardware can have a significant impact on your application’s performance and stability.
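One low-effort way to watch mount table size is to count entries in `/proc/<pid>/mountinfo` by filesystem type. A minimal sketch, assuming a Linux host; the helper names are hypothetical. It reports the mount namespace of whichever process reads the file, so run it in the namespace you want to observe.

```python
import os
import time
from collections import Counter


def mount_counts(mountinfo_text: str) -> Counter:
    """Count mount table entries per filesystem type in /proc/<pid>/mountinfo text."""
    counts: Counter = Counter()
    for line in mountinfo_text.splitlines():
        # Optional fields end at a lone "-"; the field after it is the filesystem type.
        _, sep, tail = line.partition(" - ")
        fields = tail.split()
        if sep and fields:
            counts[fields[0]] += 1
    return counts


def watch(path: str = "/proc/self/mountinfo", interval: float = 1.0, samples: int = 5) -> None:
    """Print total and overlay mount counts at a fixed interval."""
    for _ in range(samples):
        with open(path) as f:
            counts = mount_counts(f.read())
        print(f"total={sum(counts.values())} overlay={counts['overlay']}")
        time.sleep(interval)


if __name__ == "__main__":
    if os.path.exists("/proc/self/mountinfo"):
        watch(samples=3, interval=0.5)
```

Sampling this during a burst of container launches shows whether the mount table grows in step with container count and layer depth.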

Originally reported by

Netflix Tech Blog (ML)
