Stop Guessing Why Your SRE Agents Fail: IBM and UC Berkeley’s MAST Taxonomy

Stop blind prompting. Learn how IBM and UC Berkeley use MAST and IT-Bench to diagnose fatal failures in your Kubernetes and SRE agents.

Editorial Note

Reviewed and analyzed by the ScoRpii Tech Editorial Team.

In this article

- Agentic Failures Now Account for 86% of IT Automation Runs

- Understanding the Multi-Agent System Failure Taxonomy (MAST)

- Kubernetes: A Complex Environment for Agentic Systems

- Surgical Failures: The Gemini-3-Flash Profile

- Cascading State Collapse: The GPT-OSS-120B Challenge

- Kimi-K2: The Overthinking Model

- What This Means For You

- Infrastructure Impact

Agentic Failures Now Account for 86% of IT Automation Runs

You are likely experiencing a critical bottleneck in your IT automation efforts: agentic failure. Despite advancements in large language models (LLMs), benchmark data from IT-Bench reveals that only 14% of agent-driven IT workflow runs complete successfully. A pass/fail score tells you little about why the other 86% of runs failed, which hinders optimization and erodes trust in automated systems. Recent research from IBM Research and UC Berkeley introduces the Multi-Agent System Failure Taxonomy (MAST) to address this visibility gap.

Understanding the Multi-Agent System Failure Taxonomy (MAST)

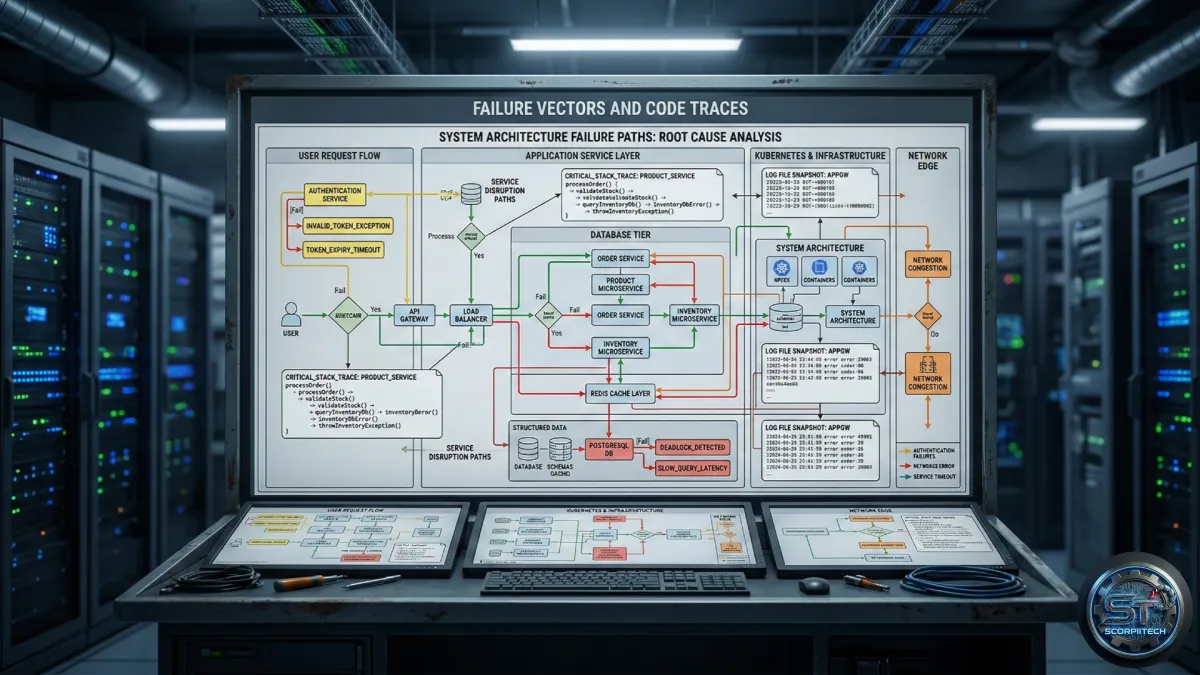

MAST provides a structured framework for analyzing agentic failures, moving beyond simple pass/fail metrics. The taxonomy identifies 14 distinct failure patterns grouped into three core areas. This lets you pinpoint the specific mechanics behind a failure rather than merely recording that it happened. Researchers applied MAST to 310 traces from IT-Bench SRE tasks (diagnosing Kubernetes outages, patching vulnerabilities, and managing cloud costs) run with Gemini-3-Flash, Kimi-K2, and GPT-OSS-120B.

Kubernetes: A Complex Environment for Agentic Systems

Kubernetes, the dominant container orchestration platform, presents unique challenges for agent-based automation. You rely on Kubernetes to automate the deployment, scaling, and management of containerized applications. Successful automation requires agents to maintain an accurate, real-time understanding of the cluster’s topology and health. Long-horizon tool loops are essential for querying logs, analyzing metrics, and executing corrective actions. The IBM and UC Berkeley study identified that failures in maintaining this internal state lead to either “cascading collapse” or “surgical failures.”

Surgical Failures: The Gemini-3-Flash Profile

The study characterized Gemini-3-Flash as exhibiting a “surgical” failure profile. This model demonstrates strong internal coherence but often fails due to isolated errors, particularly FM-3.3 (Incorrect Verification). In Gemini-3-Flash traces, this error appeared 52% more often in failed runs than in successful ones. Gemini-3-Flash frequently assumes success without sufficient verification, terminating tasks before confirming results against ground truth.

To mitigate this, you should implement an external verification gate. This gate requires tool-based evidence of success before allowing the agent to conclude a task. This forces the model to validate its actions, reducing instances of FM-3.3.
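A minimal sketch of such a gate is shown below. It is an illustration under stated assumptions, not the study's implementation: the `Evidence` structure, the `verification_gate` function, and the `fake_pod_check` helper are all hypothetical names, and the replica counts stand in for whatever a real cluster query would return.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Evidence:
    """Result of an independent, tool-based check (hypothetical structure)."""
    passed: bool
    detail: str


def verification_gate(agent_claims_done: bool, check: Callable[[], Evidence]) -> bool:
    """Let the agent conclude only if a tool-based check confirms success."""
    if not agent_claims_done:
        return False
    evidence = check()  # runs outside the model's own reasoning
    return evidence.passed


def fake_pod_check(ready: int, desired: int) -> Evidence:
    # Stand-in for a real cluster query; the caller supplies the numbers.
    return Evidence(passed=(ready == desired), detail=f"{ready}/{desired} replicas ready")


# The agent says it is done, but only 2 of 3 replicas are ready: the gate refuses.
blocked = verification_gate(True, lambda: fake_pod_check(2, 3))
# All replicas are ready: the gate lets the task conclude.
allowed = verification_gate(True, lambda: fake_pod_check(3, 3))
```

The key design choice is that the check is a callable the agent cannot satisfy by assertion alone; the model's claim of success is necessary but never sufficient.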

Cascading State Collapse: The GPT-OSS-120B Challenge

Open-weight models like GPT-OSS-120B tend to exhibit “cascading collapse.” In the study, GPT-OSS-120B averaged 5.3 distinct failure modes per failed trace, indicating a fundamental inability to maintain internal state. Errors accumulate over time, leading to complete derailment of the automation process. This contrasts sharply with Gemini-3-Flash’s more focused failures.
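To make the "failure modes per failed trace" metric concrete, here is a small sketch of how it could be computed from annotated traces. The trace records are made-up illustration data, the dictionary layout is an assumption, and only failure-mode codes named in this article are used.

```python
from statistics import mean

# Made-up annotations: each trace has an outcome and the set of MAST codes observed.
traces = [
    {"id": "t1", "success": False, "modes": {"FM-3.3"}},
    {"id": "t2", "success": False, "modes": {"FM-1.5", "FM-2.6", "FM-3.1", "FM-3.3"}},
    {"id": "t3", "success": True, "modes": {"FM-2.6"}},
]


def modes_per_failed_trace(records):
    """Average number of distinct failure modes across failed traces only."""
    counts = [len(r["modes"]) for r in records if not r["success"]]
    return mean(counts) if counts else 0.0


avg = modes_per_failed_trace(traces)  # t1 has 1 mode, t2 has 4; t3 is ignored
```

A low average points toward isolated errors, while a high one suggests errors compounding within a single run.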

Kimi-K2: The Overthinking Model

Kimi-K2 occupies an intermediate position, characterized as an “overthinking” model. It generates lengthy reasoning chains that frequently fail during execution. Researchers found that 92% of Kimi-K2 failures were attributed to FM-2.6 (Action-Reasoning Mismatch), where the model’s intended logic doesn’t align with the actual tool call executed. You can address this by focusing on aligning the reasoning output with the tool-calling schema.
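One way to surface this kind of mismatch, sketched here as an assumption rather than the researchers' method, is to have the agent emit its intended tool call in structured form and compare it with the call that was actually executed. The `ToolCall` type, the `find_mismatches` function, and the `get_logs` tool name are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class ToolCall:
    """A tool invocation: tool name plus keyword arguments (hypothetical schema)."""
    name: str
    args: Dict[str, Any] = field(default_factory=dict)


def find_mismatches(intended: ToolCall, executed: ToolCall) -> List[str]:
    """List every difference between the stated intent and the executed call."""
    problems = []
    if intended.name != executed.name:
        problems.append(f"tool: intended {intended.name!r}, executed {executed.name!r}")
    for key in sorted(set(intended.args) | set(executed.args)):
        want, got = intended.args.get(key), executed.args.get(key)
        if want != got:
            problems.append(f"arg {key!r}: intended {want!r}, executed {got!r}")
    return problems


# The reasoning targets the "payments" namespace, but the call queries "default".
intended = ToolCall("get_logs", {"namespace": "payments", "pod": "api-1"})
executed = ToolCall("get_logs", {"namespace": "default", "pod": "api-1"})
mismatches = find_mismatches(intended, executed)
```

A non-empty result can be logged as a candidate FM-2.6 event or used to block the call before it runs.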

What This Means For You

You must shift your focus from overall success rates to granular failure analysis. MAST provides the vocabulary and structure to do so. Generating structured failure vectors from your execution logs allows you to identify whether your bottlenecks stem from structural friction (minor flaws like repetition) or decisive fatal flaws.
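As a rough sketch of what a failure vector could look like, the example below encodes each trace as a binary vector over failure-mode codes and compares how often each code appears in failed versus successful runs. The data is invented for illustration, the vector layout is an assumption, and `MODES` lists only the codes named in this article rather than the full taxonomy.

```python
MODES = ["FM-1.5", "FM-2.6", "FM-3.1", "FM-3.3"]  # subset of MAST codes, for illustration


def to_vector(observed):
    """Binary failure vector: 1 if the mode was annotated on the trace, else 0."""
    return [1 if mode in observed else 0 for mode in MODES]


def prevalence(vectors):
    """Fraction of traces in which each mode appears."""
    if not vectors:
        return [0.0] * len(MODES)
    return [sum(column) / len(vectors) for column in zip(*vectors)]


# Made-up annotations for two failed and two successful traces.
failed = [to_vector({"FM-3.3"}), to_vector({"FM-3.3", "FM-2.6"})]
passed = [to_vector({"FM-2.6"}), to_vector(set())]

# Positive gap: the mode is more common in failures than in successes.
gap = {
    mode: f - p
    for mode, f, p in zip(MODES, prevalence(failed), prevalence(passed))
}
```

In this toy data, FM-2.6 is equally common in both groups, which would suggest friction the agent can survive, while FM-3.3 appears only in failed traces, which would mark it as a candidate fatal flaw.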

Here’s a breakdown of actionable steps based on the MAST taxonomy:

- FM-3.1 & FM-1.5 (Termination Confusion): Refine your prompting or fine-tuning to define clear exit conditions.

- Gemini-3-Flash: Prioritize implementing external verification steps.

- Kimi-K2: Focus on aligning reasoning output with the tool-calling schema to reduce the 92% action-reasoning mismatch rate.

- GPT-OSS-120B: Investigate methods to improve state management and reduce error accumulation.

Infrastructure Impact

The adoption of MAST isn’t merely an academic exercise. It directly impacts your infrastructure costs and operational efficiency. By identifying and addressing specific failure modes, you can reduce the number of failed automation runs, minimizing wasted compute resources and engineering time. This translates to lower cloud bills and faster incident resolution. Furthermore, a deeper understanding of agentic failures allows you to make more informed decisions about model selection and fine-tuning, optimizing your automation investments.

Originally reported by Hugging Face Blog