Refactor Your Training Pipelines with DeepSpeed’s PyTorch-Identical Backward API

Cut multimodal training time by a reported 30% and peak memory by up to 40% with DeepSpeed’s new PyTorch-identical backward API and low-precision BF16/FP16 training.

Editorial Note

Review and analysis by the ScoRpii Tech Editorial Team.

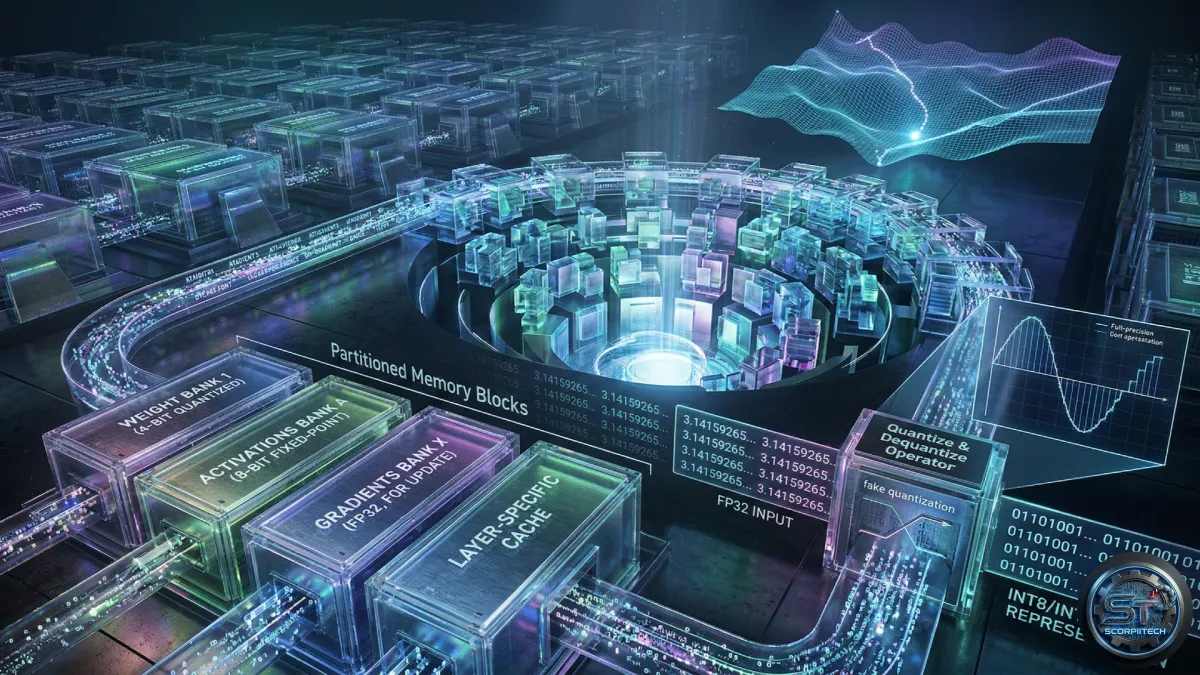

Scaling Model Training with ZeRO

You can now train significantly larger models on existing infrastructure thanks to advances in memory optimization. The Zero Redundancy Optimizer (ZeRO), part of the DeepSpeed framework, partitions model states (parameters, gradients, and optimizer states) across the GPUs in a distributed training job. This removes the need to replicate every model state on each device, a common bottleneck for models with billions of parameters. The stages build on one another: ZeRO-1 partitions optimizer states, ZeRO-2 adds gradient partitioning, and ZeRO-3 extends partitioning to the model parameters themselves.
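To make the effect of each stage concrete, here is a back-of-the-envelope sketch of per-GPU memory for model states. It assumes mixed-precision Adam with 2-byte parameters, 2-byte gradients, and 12 bytes per parameter of FP32 optimizer state (master weights, momentum, variance). The function name `zero_model_state_bytes` is ours, not a DeepSpeed API, and activations, buffers, and fragmentation are ignored.

```python
def zero_model_state_bytes(num_params, num_gpus, stage, optimizer_bytes_per_param=12):
    """Estimate per-GPU bytes for model states under a given ZeRO stage.

    Assumption: 2-byte parameters and gradients, plus FP32 Adam state
    (12 bytes per parameter by default). Activations are not counted.
    """
    param_bytes = 2 * num_params
    grad_bytes = 2 * num_params
    optim_bytes = optimizer_bytes_per_param * num_params

    if stage >= 1:  # ZeRO-1: partition optimizer states
        optim_bytes /= num_gpus
    if stage >= 2:  # ZeRO-2: also partition gradients
        grad_bytes /= num_gpus
    if stage >= 3:  # ZeRO-3: also partition parameters
        param_bytes /= num_gpus

    return param_bytes + grad_bytes + optim_bytes


# Illustrative numbers: a 7.5B-parameter model on 64 GPUs.
per_gpu_gb = {
    stage: zero_model_state_bytes(7.5e9, 64, stage) / 1e9
    for stage in (0, 1, 2, 3)
}
for stage, gb in per_gpu_gb.items():
    print(f"ZeRO stage {stage}: {gb:.1f} GB per GPU")
```

Under these assumptions, model states shrink from 120 GB per GPU with no partitioning to roughly 1.9 GB with ZeRO-3. Real peak memory will be higher once activations and temporary buffers are included.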

ZeRO can further expand your effective capacity by offloading states to CPU memory or NVMe storage when they aren’t actively in use. Offloading adds data-transfer overhead and latency, but it lets you work around VRAM limits that previously capped model size. This matters more as you move toward larger models and datasets.
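As a minimal sketch, offloading is typically switched on in the `zero_optimization` section of the DeepSpeed config. The example below builds such a config as a Python dict and serializes it to JSON. The batch size and the `/local_nvme` path are placeholders we chose for illustration, and parameter offload applies only to ZeRO stage 3; check the configuration reference for the DeepSpeed version you run.

```python
import json

# Hypothetical values: tune the batch size and NVMe path for your cluster.
ds_config = {
    "train_micro_batch_size_per_gpu": 1,
    "zero_optimization": {
        "stage": 3,
        # Keep optimizer states in pinned host memory.
        "offload_optimizer": {"device": "cpu", "pin_memory": True},
        # Spill partitioned parameters to NVMe (stage 3 only).
        "offload_param": {
            "device": "nvme",
            "nvme_path": "/local_nvme",  # placeholder path
            "pin_memory": True,
        },
    },
}

# Serialize so it can be saved as ds_config.json or passed to the launcher.
config_json = json.dumps(ds_config, indent=2)
print(config_json)
```

Starting with CPU offload for optimizer states and adding NVMe only when host memory also runs out is a reasonable order to try, since each tier is slower than the previous one.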

PyTorch-Compatible Backward Pass Simplifies Development

Historically, DeepSpeed’s engine presented challenges for developers due to inconsistencies in its backward API. These architectural hurdles made it difficult to implement standard PyTorch patterns and often required substantial boilerplate code. The PyTorch Blog reported that recent updates have brought model development with DeepSpeed much closer to a “vanilla PyTorch” experience.

This change is especially useful for multimodal models, such as those combining vision encoders with Large Language Models (LLMs). Legacy DeepSpeed implementations frequently hit bugs with non-scalar gradients and required workarounds. With the backward pass aligned to standard PyTorch behavior, integrating DeepSpeed-Ulysses and Ray Actor groups becomes more straightforward. The reported 30% speedup in multimodal training is attributed to more efficient gradient handling and the removal of these shims.
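For readers unfamiliar with the non-scalar gradient pattern, the sketch below shows it in plain PyTorch, with no DeepSpeed involved. Two small `nn.Linear` layers stand in for a vision encoder and an LLM (our simplification), and the encoder receives its gradient through `Tensor.backward(gradient=...)` instead of a scalar loss. Legacy DeepSpeed code drove the backward pass through `engine.backward(loss)`; whether a DeepSpeed-wrapped module accepts the pattern below as written depends on the version you run, so treat this as an illustration of the target behavior only.

```python
import torch
from torch import nn


def make_modules(seed=0):
    """Build identical stand-ins for a vision encoder and an LLM."""
    torch.manual_seed(seed)
    return nn.Linear(8, 4), nn.Linear(4, 1)


def split_backward(images):
    """Backward in two hops, as when encoder and LLM run separately."""
    encoder, llm = make_modules()
    features = encoder(images)  # non-scalar encoder output

    # The LLM sees a detached copy, so its graph stops at llm_input.
    llm_input = features.detach().requires_grad_(True)
    loss = llm(llm_input).pow(2).mean()
    loss.backward()  # ordinary scalar backward through the LLM

    # Non-scalar backward: feed the received gradient into the encoder graph.
    features.backward(gradient=llm_input.grad)
    return encoder.weight.grad.clone()


def joint_backward(images):
    """Reference: one end-to-end graph and a single scalar backward."""
    encoder, llm = make_modules()
    loss = llm(encoder(images)).pow(2).mean()
    loss.backward()
    return encoder.weight.grad.clone()


torch.manual_seed(1)
images = torch.randn(2, 8)
grads_match = torch.allclose(
    split_backward(images), joint_backward(images), atol=1e-6
)
print("split and joint encoder gradients match:", grads_match)
```

The two-hop version is what a disaggregated setup needs, because the encoder and the LLM may live in different processes and only the gradient tensor crosses the boundary.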

Maximize VRAM Efficiency with Low-Precision Training

The memory cost of mixed-precision training often limits how large a model you can train. DeepSpeed traditionally kept memory-intensive FP32 master states to ensure training stability. However, many workloads, including Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL), demonstrate reliable convergence without these high-precision states.

DeepSpeed now offers a more memory-efficient path: low-precision training that uses BF16 or FP16 exclusively. In the reported test, a 7B-parameter model trained on the Wikitext-103 dataset across 4 GPUs showed a 40% reduction in peak memory over 1,000 training steps. You can use this path by combining `torch.autocast` with ZeRO-3, which eliminates the FP32 master weight overhead. The freed memory lets you increase batch sizes or run larger models on your existing GPU fleet.
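The rough arithmetic behind the savings can be sketched as follows. The layouts are our assumptions about which states stay in FP32 under Adam, not a description of DeepSpeed internals, and the names `StateLayout` and `per_gpu_gb` are hypothetical. The sketch covers model states only, so it does not reproduce the reported 40% peak-memory figure, which also reflects activations and temporary buffers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StateLayout:
    """Bytes per parameter for each model state (Adam keeps two moments)."""
    weight_bytes: int
    grad_bytes: int
    master_weight_bytes: int
    moment_bytes: int  # per moment

    def bytes_per_param(self):
        return (self.weight_bytes + self.grad_bytes
                + self.master_weight_bytes + 2 * self.moment_bytes)


# Assumed layouts for illustration.
FP32_MASTER = StateLayout(2, 2, 4, 4)        # classic mixed precision
NO_MASTER = StateLayout(2, 2, 0, 4)          # drop the FP32 weight copy
ALL_LOW_PRECISION = StateLayout(2, 2, 0, 2)  # moments also in 2 bytes


def per_gpu_gb(layout, num_params, num_gpus):
    """Model-state GB per GPU when everything is partitioned (ZeRO-3)."""
    return layout.bytes_per_param() * num_params / num_gpus / 1e9


# Mirrors the article's setup: 7B parameters across 4 GPUs.
for name, layout in [("FP32 master states", FP32_MASTER),
                     ("no FP32 master weights", NO_MASTER),
                     ("all states low precision", ALL_LOW_PRECISION)]:
    print(f"{name}: {per_gpu_gb(layout, 7e9, 4):.1f} GB per GPU")
```

Under these assumptions, dropping the FP32 master copy alone trims model states from 16 to 12 bytes per parameter, and keeping the optimizer moments in low precision as well brings it down to 8.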

What This Means For You

Evaluate your multimodal training pipelines to see whether the PyTorch-identical backward API can simplify your codebase. If you are hitting VRAM limits, the new low-precision BF16/FP16 training path could reduce peak memory usage by up to 40%. That headroom may let you shift some workloads from expensive H100 instances to more cost-effective hardware, provided you confirm that your workload still converges as expected. Consider the following benefits:

- Reduced Development Time: Simplified API integration with PyTorch.

- Lower Infrastructure Costs: Potential to utilize less expensive GPU hardware.

- Increased Throughput: Larger batch sizes and faster training cycles.

The Bottom Line for Developers

These updates to DeepSpeed and ZeRO are a significant step toward making large model training more accessible. Because they prioritize developer experience and hardware efficiency, you can focus on model performance rather than framework-level complexity. The combination of a PyTorch-compatible backward API and low-precision training lets you take on more ambitious projects with your existing resources.

Originally reported by

PyTorch Blog
