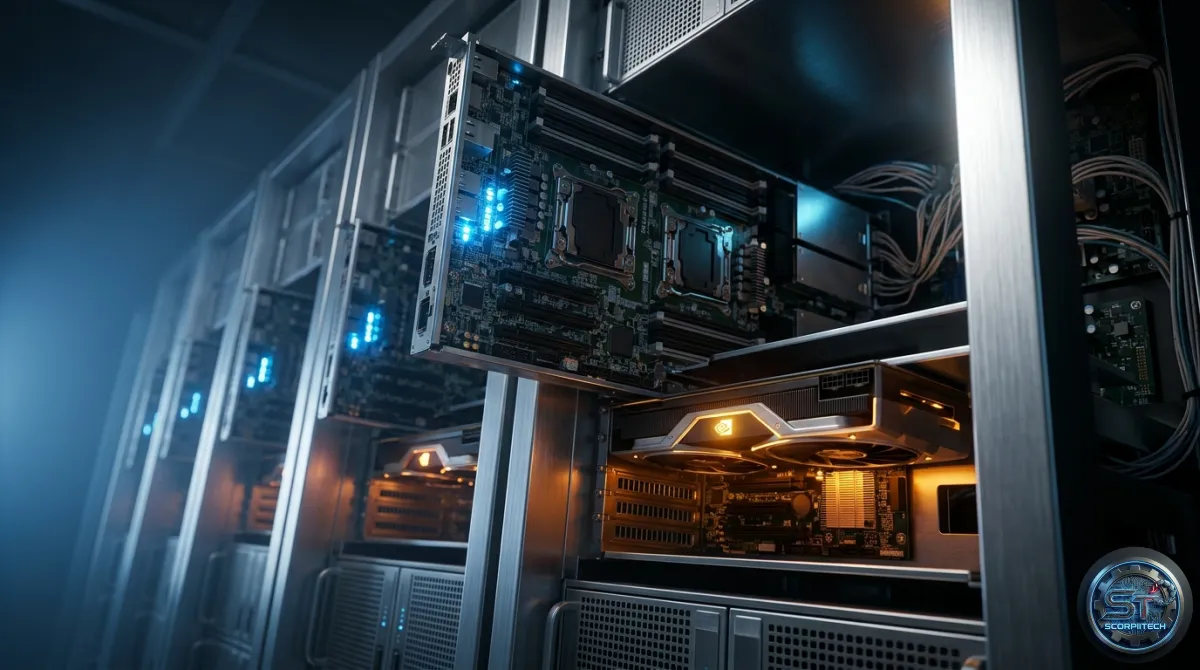

Your LLM Serving Bottleneck: Why Disaggregating CPU from GPU is Critical

If you're operating LLM inference, you're likely bottlenecked. Discover how Shepherd Model Gateway's...
