Your Next Move in Healthcare Robotics: Dissecting Foundational Physical AI

Examine the technical implications of the Open-H-Embodiment dataset and GR00T-H physical AI models. Understand what this means for your healthcare robotics infrastructure.

Editorial Note

Review and analysis by the ScoRpii Tech Editorial Team.

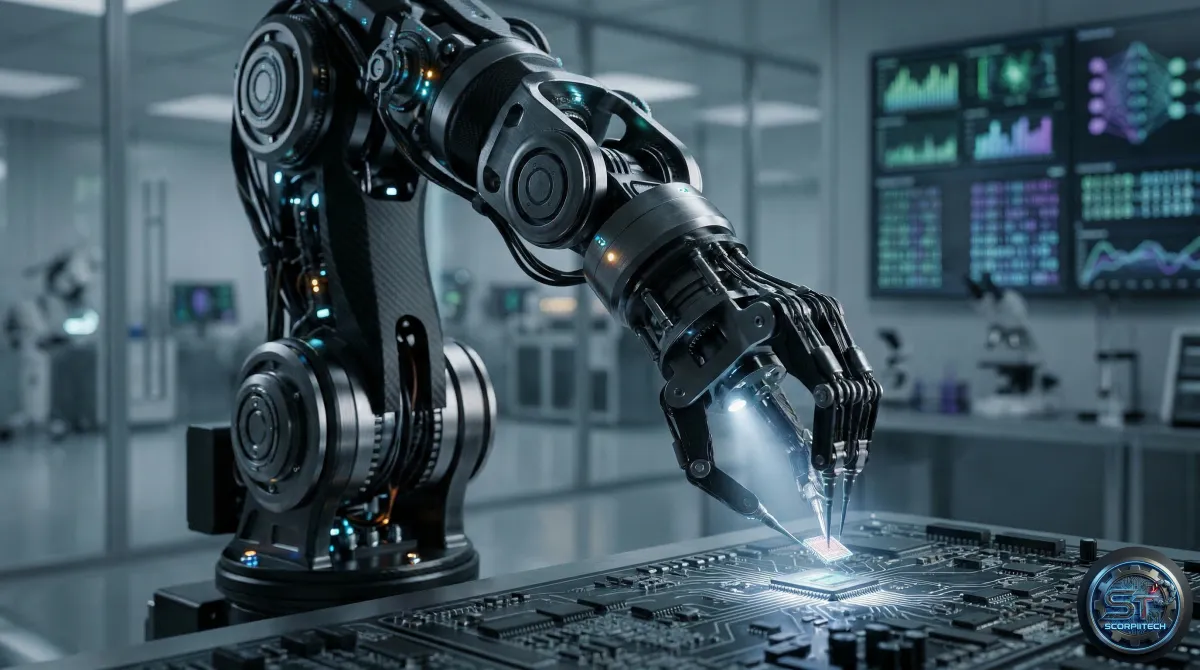

Shifting Paradigms in Healthcare Robotics

Your existing healthcare AI systems likely focus on perception: interpreting signals to aid diagnosis or surgical planning. The recent release of the Open-H-Embodiment dataset and the GR00T-H foundation models marks a shift towards physical AI, in which robots learn to carry out complex tasks and interact with the physical world rather than only observe it.

This initiative addresses a gap in physical AI for healthcare robotics by providing a dataset and models engineered for high-precision movements and intricate procedures. Your teams can build on both to develop systems that execute multi-step physical tasks instead of starting from scratch.

Foundational Architecture of GR00T-H

The GR00T-H model incorporates four design considerations: high precision, specialized hardware, imitation learning, and long-horizon dexterity. These considerations tackle the unique demands of healthcare robotics, such as handling cable-driven systems and managing multi-step surgical procedures.

Imitation Learning (IL) is a machine learning paradigm where an agent learns by observing demonstrations from an expert. In robotics, IL involves mapping sensor inputs to actuator commands based on recorded human demonstrations. Applying IL to complex tasks like surgery introduces challenges, such as replicating subtle human dexterity and ensuring safety in precise movements.
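The mapping from sensor inputs to actuator commands described above can be illustrated with behavior cloning, the simplest form of imitation learning. The following is a minimal sketch, not the GR00T-H training procedure: it assumes a one-dimensional observation and action, a linear policy, and a handful of made-up demonstration pairs. The names `LinearPolicy` and `behavior_clone` are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class LinearPolicy:
    """Toy policy: action = weight * observation + bias."""
    weight: float
    bias: float

    def act(self, observation: float) -> float:
        return self.weight * observation + self.bias


def behavior_clone(demos: List[Tuple[float, float]]) -> LinearPolicy:
    """Fit the policy to (observation, action) pairs by ordinary least squares."""
    n = len(demos)
    mean_obs = sum(o for o, _ in demos) / n
    mean_act = sum(a for _, a in demos) / n
    cov = sum((o - mean_obs) * (a - mean_act) for o, a in demos)
    var = sum((o - mean_obs) ** 2 for o, _ in demos)
    if var == 0:
        raise ValueError("demonstrations need at least two distinct observations")
    weight = cov / var
    return LinearPolicy(weight=weight, bias=mean_act - weight * mean_obs)


# Hypothetical expert demonstrations: the expert always outputs 2 * obs + 1.
demos = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
policy = behavior_clone(demos)

# The cloned policy can now be queried on an observation the expert never showed.
predicted = policy.act(4.0)
```

A real surgical policy would replace the linear fit with a large neural network and the scalar observation with camera images and joint states, but the supervised structure (recorded expert pairs in, a policy out) stays the same.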

Technical Specifications

The Open-H-Embodiment dataset comprises 32 datasets across 9 robot embodiments. The GR00T-H model was fine-tuned using this dataset, requiring approximately 10,000 GPU-hours on 64 NVIDIA A100 GPUs. If all 64 GPUs ran concurrently, that works out to roughly 156 hours, or about 6.5 days, of wall-clock time. The model uses a unified 44-dimensional action space, designed to generalize across diverse robot platforms.

- 32 datasets across 9 robot embodiments

- 10,000 GPU-hours on 64 NVIDIA A100 GPUs

- Unified 44-dimensional action space
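One common way to share a single action vector across robots with different numbers of actuators is to assign each embodiment a fixed set of slots and mask out the rest. The sketch below illustrates that idea only; the source does not describe how GR00T-H lays out its 44 dimensions, so the embodiment names, slot assignments, and the functions `to_unified` and `from_unified` are all hypothetical.

```python
from typing import Dict, List, Tuple

UNIFIED_DIM = 44  # size of the unified action space stated in the article

# Hypothetical slot layout: which unified indices each embodiment drives.
EMBODIMENT_SLOTS: Dict[str, List[int]] = {
    "single_arm_7dof": list(range(0, 7)),
    "dual_arm_14dof": list(range(0, 14)),
}


def to_unified(embodiment: str, native_action: List[float]) -> Tuple[List[float], List[bool]]:
    """Scatter a native action into the unified vector and return a validity mask."""
    slots = EMBODIMENT_SLOTS[embodiment]
    if len(native_action) != len(slots):
        raise ValueError(
            f"{embodiment} expects {len(slots)} values, got {len(native_action)}"
        )
    unified = [0.0] * UNIFIED_DIM
    mask = [False] * UNIFIED_DIM
    for slot, value in zip(slots, native_action):
        unified[slot] = value
        mask[slot] = True
    return unified, mask


def from_unified(embodiment: str, unified: List[float]) -> List[float]:
    """Gather the slots this embodiment uses back into its native action."""
    if len(unified) != UNIFIED_DIM:
        raise ValueError(f"expected {UNIFIED_DIM} values, got {len(unified)}")
    return [unified[slot] for slot in EMBODIMENT_SLOTS[embodiment]]


native = [0.1, -0.2, 0.3, 0.0, 0.5, -0.1, 0.2]
unified, mask = to_unified("single_arm_7dof", native)
recovered = from_unified("single_arm_7dof", unified)
```

The mask matters during training: a loss computed only over masked-in slots keeps the padded zeros of a 7-DoF arm from being treated as real targets.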

Coalition and Partnerships

The development of Open-H-Embodiment and GR00T-H involved a broad coalition of academic and industry partners, including Johns Hopkins University, Technical University of Munich, NVIDIA, and others. This collaboration underscores the complexity and scope of the project.

What This Means For Your Operations

The release of Open-H-Embodiment and GR00T-H signals a maturation of the healthcare robotics field. You now have a foundational dataset and pre-trained models that can expedite the development cycle for physical AI applications. Understanding and integrating these models will be crucial for developing surgical robots or high-dexterity medical devices.

Your infrastructure planning should account for robust sensing, high-fidelity actuation, and low-latency control systems. Because these models are trained by imitation learning, you will also need high-quality human demonstration data, which in turn requires dedicated capture and annotation pipelines.
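A capture pipeline can enforce part of that quality bar automatically. As a rough sketch, the check below rejects a recorded demonstration whose samples arrive out of order or with gaps larger than a timing budget. The budget value and the function names `max_sample_gap` and `meets_timing_budget` are assumptions for illustration, not requirements taken from the Open-H-Embodiment release.

```python
from typing import List


def max_sample_gap(timestamps_s: List[float]) -> float:
    """Return the largest gap between consecutive samples, in seconds."""
    if len(timestamps_s) < 2:
        raise ValueError("need at least two samples to measure a gap")
    gaps = []
    for earlier, later in zip(timestamps_s, timestamps_s[1:]):
        if later <= earlier:
            raise ValueError("timestamps must be strictly increasing")
        gaps.append(later - earlier)
    return max(gaps)


def meets_timing_budget(timestamps_s: List[float], budget_s: float) -> bool:
    """True if no gap between consecutive samples exceeds the budget."""
    return max_sample_gap(timestamps_s) <= budget_s


# Hypothetical recording: nominal 10 ms spacing with one dropped stretch.
episode = [0.00, 0.01, 0.02, 0.05]

# Assumed budget of 20 ms; choose a value that matches your control loop.
usable = meets_timing_budget(episode, budget_s=0.02)
```

Running a check like this at capture time is cheaper than discovering dropped frames after annotation has already been paid for.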

The Bottom Line for Developers

In conclusion, the Open-H-Embodiment dataset and GR00T-H model offer a significant opportunity for advancing healthcare robotics. By leveraging these resources, you can develop more sophisticated and effective physical AI systems. As you integrate these models into your development cycle, consider the computational demands, infrastructure requirements, and potential applications for your organization.

Originally reported by Hugging Face Blog