Gemma 4 Arrives: What It Means For Your GPU Clusters and Edge Deployments

Google released Gemma 4 open models, including MoE variants. Understand the direct implications for your mobile, IoT, and data center inference strategies now.

Editorial Note

Review and analysis by the ScoRpii Tech Editorial Team.

Architectural Breakdown: Practical Implications for Your Stack

The Gemma 4 release provides a tiered approach to open model deployment, directly addressing your needs for edge computing applications where latency and bandwidth are critical constraints. You now have the option of two smaller, more resource-efficient models: the Effective 2B (E2B) and Effective 4B (E4B) variants, engineered to run on mobile and IoT devices.

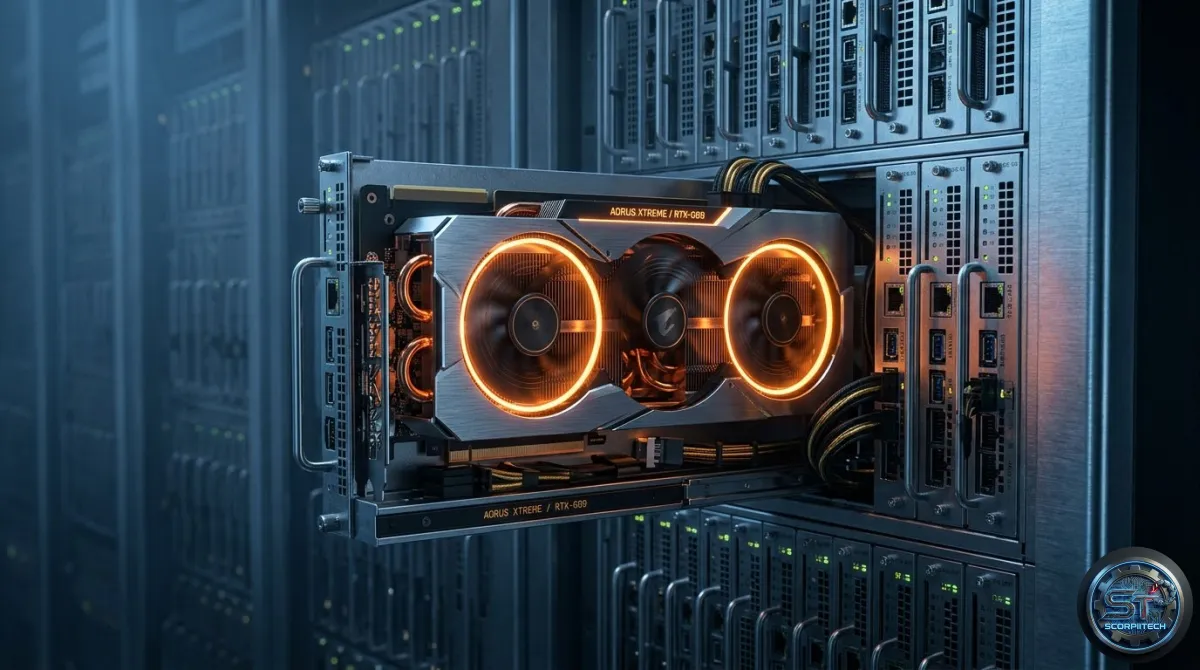

For more demanding tasks, the Gemma 4 suite includes a 26B Mixture of Experts (MoE) model and a 31B Dense model, both specified to run on a single 80GB NVIDIA H100 GPU. This detail is significant for your data center operations, as it indicates a specific hardware target and potential for consolidating larger model inference onto fewer, albeit powerful, accelerators.
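To see why a single 80GB card is a plausible target, a rough weight-memory calculation helps. The sketch below is a back-of-envelope estimate, not a figure from the release: it assumes "26B" and "31B" are total parameter counts, uses common bytes-per-parameter values for each precision, and ignores KV cache and activation memory entirely.

```python
# Back-of-envelope weight-memory estimate.
# Assumptions (not from the Gemma 4 release): the bytes-per-parameter values
# below, 1 GB = 1e9 bytes, and "26B"/"31B" being total parameter counts.
# KV cache and activations are deliberately left out.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}
GPU_MEMORY_GB = 80  # single H100, the hardware target named in the article


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Memory needed for the weights alone, in GB."""
    # (params_billions * 1e9 params) * bytes / 1e9 bytes-per-GB
    return params_billions * BYTES_PER_PARAM[precision]


def headroom_gb(params_billions: float, precision: str) -> float:
    """GPU memory left over after loading the weights."""
    return GPU_MEMORY_GB - weight_memory_gb(params_billions, precision)


for name, size in [("26B MoE", 26), ("31B Dense", 31)]:
    for precision in BYTES_PER_PARAM:
        print(f"{name} @ {precision}: "
              f"{weight_memory_gb(size, precision):.1f} GB weights, "
              f"{headroom_gb(size, precision):.1f} GB headroom")
```

Under these assumptions the 31B Dense model at bf16 needs 62 GB for weights, leaving 18 GB on an 80GB card for everything else. Real headroom depends on context length, batch size, and the serving runtime.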

Concept Refresher: Mixture of Experts (MoE)

A Mixture of Experts (MoE) architecture routes each input token to a small subset of specialized 'expert' subnetworks instead of running the whole network. This lets an MoE model hold far more total parameters than a dense model with comparable per-token compute cost during inference. For you, this translates to models that can capture more knowledge and handle more complex tasks while keeping inference latency manageable. Keep in mind that all experts generally still need to be resident in memory, so the memory footprint tracks the total parameter count rather than the active one.
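The routing idea can be illustrated with a toy top-k gate. This is a generic sketch of the technique, not Gemma 4's actual router: the expert functions, the router scores, and the choice of k=2 are all hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in chosen)
    return [(i, probs[i] / mass) for i in chosen]


def moe_layer(token, experts, router_scores, k=2):
    """Weighted sum of the selected experts' outputs; other experts never run."""
    output = [0.0] * len(token)
    for index, weight in route_top_k(router_scores, k):
        expert_out = experts[index](token)
        output = [o + weight * e for o, e in zip(output, expert_out)]
    return output


# Toy experts: each scales the token by a different (hypothetical) factor.
experts = [lambda x, f=f: [f * v for v in x] for f in (1.0, 2.0, 3.0, 4.0)]
token = [1.0, -1.0]
scores = [0.1, 2.0, 0.3, 1.5]  # hypothetical router logits for this token

print(route_top_k(scores, k=2))
print(moe_layer(token, experts, scores, k=2))
```

Only two of the four toy experts execute for this token, which is where the compute saving comes from. All four still exist in memory, which is why total parameter count drives the memory requirement.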

Ecosystem Integration and Your Deployment Strategy

The introduction of Gemma 4 is not an isolated event; its efficacy hinges on broader ecosystem support. Collaborations and integrations with entities like NVIDIA, Qualcomm Technologies, and MediaTek are essential for the real-world utility of these models. For your mobile and IoT initiatives, the involvement of Qualcomm Technologies and MediaTek means a higher probability of optimized performance on their respective chipsets.

Understanding that the 26B and 31B models target a single 80GB NVIDIA H100 GPU gives you a clear hardware benchmark. If your current infrastructure uses these GPUs, you can assess the immediate deployability of these larger Gemma 4 variants. This specific hardware requirement shapes your procurement decisions and capacity planning, allowing you to project the total cost of ownership for running these models at scale.
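A capacity projection along these lines can start from a very small model. The sketch below is an illustration only: the throughput, utilization target, and hourly price are hypothetical placeholders that you would replace with your own benchmark results and pricing.

```python
import math


def gpus_needed(peak_requests_per_s: float,
                per_gpu_requests_per_s: float,
                target_utilization: float = 0.7) -> int:
    """GPUs required to serve peak load while staying under a utilization cap."""
    if per_gpu_requests_per_s <= 0 or not 0 < target_utilization <= 1:
        raise ValueError("throughput must be > 0 and utilization in (0, 1]")
    usable = per_gpu_requests_per_s * target_utilization
    return math.ceil(peak_requests_per_s / usable)


def monthly_cost(gpu_count: int, hourly_rate: float, hours: float = 730.0) -> float:
    """Simple compute cost: GPUs x hourly rate x hours in the month."""
    return gpu_count * hourly_rate * hours


# Hypothetical inputs: measure per-GPU throughput on your own workload.
count = gpus_needed(peak_requests_per_s=50, per_gpu_requests_per_s=8)
print(count, monthly_cost(count, hourly_rate=3.0))
```

The utilization cap is a design choice: sizing for 100% utilization leaves no room for traffic spikes, so the sketch defaults to a more conservative 70%.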

Key Features and Specifications

The Gemma 4 suite includes the following key features and specifications:

- Effective 2B (E2B) and Effective 4B (E4B) models for edge devices

- 26B Mixture of Experts (MoE) model for more demanding tasks

- 31B Dense model for high-performance inference

- 26B MoE and 31B Dense models both specified to run on a single 80GB NVIDIA H100 GPU
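If you track model options in code, the lineup above maps onto a small lookup table. This is a minimal sketch using only what the article states; the `GemmaVariant` class and `variants_for` helper are hypothetical names, and the architecture of the edge models is marked "unspecified" because the article does not describe it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GemmaVariant:
    name: str
    architecture: str  # "moe", "dense", or "unspecified" where the article is silent
    target: str        # "edge" or "datacenter"


# Entries mirror the list above; nothing here comes from an official API.
LINEUP = (
    GemmaVariant("E2B", "unspecified", "edge"),
    GemmaVariant("E4B", "unspecified", "edge"),
    GemmaVariant("26B MoE", "moe", "datacenter"),
    GemmaVariant("31B Dense", "dense", "datacenter"),
)


def variants_for(target: str) -> list[str]:
    """Names of the variants aimed at the given deployment target."""
    return [v.name for v in LINEUP if v.target == target]


print(variants_for("edge"))
print(variants_for("datacenter"))
```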

What This Means For Your Deployments

You now have a broader set of open models to choose from for your generative AI initiatives. The E2B and E4B models offer a concrete path for running intelligent agents directly on edge devices, which can reduce cloud egress costs and improve local responsiveness. Teams working on mobile applications or embedded systems get an open foundation to build on without routing every request through a data center.

The Bottom Line for Developers

Gemma 4 gives you two clear deployment tiers: E2B and E4B for mobile and IoT devices, and the 26B MoE and 31B Dense models for a single 80GB NVIDIA H100 GPU. Match each workload to a tier, check whether your existing hardware meets the stated target, and factor in the chipset support from NVIDIA, Qualcomm Technologies, and MediaTek when planning your rollout.

Originally reported by

Google DeepMind
