Gemini Robotics-ER 1.6: How Enhanced Embodied Reasoning Transforms Your Robotic Deployments

Gemini Robotics-ER 1.6 introduces enhanced embodied reasoning. This article breaks down how the shift from instruction following to real-world reasoning affects your robotics deployments.

Editorial Note

Review and analysis by ScoRpii Tech Editorial Team.

Understanding Embodied Reasoning

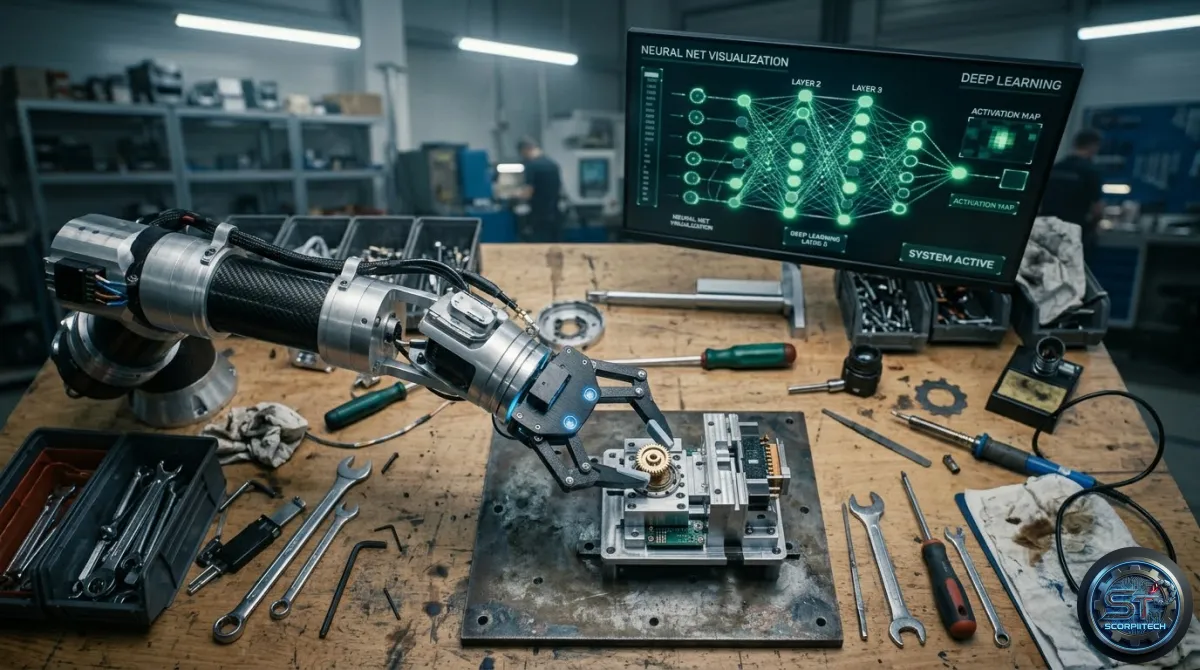

Embodied reasoning gives your robotic applications the ability to reason, plan, and act within a physical environment using their body and sensory inputs. The mechanism involves continuously processing sensor data to build a dynamic model of the surroundings, predicting the effects of actions, and formulating plans that account for real-world physics. Your robots can then move from executing pre-programmed sequences to making context-aware decisions that adapt to novel situations and physical constraints.

Embodied reasoning directly integrates perception, action, and physical interaction, unlike abstract reasoning, which operates on symbolic representations. For a robotic system, this means developing a richer understanding of its operational environment, moving beyond simple object recognition to a more profound comprehension of spatial relationships, physical affordances, and task requirements.
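The sense, model, predict, and plan cycle described in this section can be illustrated with a toy example. The sketch below is not how Gemini Robotics-ER 1.6 works internally. It is a minimal grid-world illustration in which every name (`TRUE_WORLD`, `sense`, `plan`, `run`) is hypothetical: a robot discovers obstacles with a short-range sensor, updates its internal model, and replans with breadth-first search after every step.

```python
from collections import deque

# Hypothetical toy world: 0 = free cell, 1 = obstacle.
# The robot does NOT know this map in advance; it only senses adjacent cells.
TRUE_WORLD = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 1, 0],
    [1, 0, 0, 0],
]
MOVES = [(0, 1), (1, 0), (0, -1), (-1, 0)]


def in_bounds(cell):
    r, c = cell
    return 0 <= r < len(TRUE_WORLD) and 0 <= c < len(TRUE_WORLD[0])


def sense(pos, known_obstacles):
    """Perception: record any obstacle in the four neighbouring cells."""
    for dr, dc in MOVES:
        cell = (pos[0] + dr, pos[1] + dc)
        if in_bounds(cell) and TRUE_WORLD[cell[0]][cell[1]] == 1:
            known_obstacles.add(cell)


def plan(start, goal, known_obstacles):
    """Planning: BFS over the current model; unseen cells are assumed free."""
    parents = {start: None}
    queue = deque([start])
    while queue:
        cur = queue.popleft()
        if cur == goal:
            path = []
            while cur is not None:
                path.append(cur)
                cur = parents[cur]
            return path[::-1]
        for dr, dc in MOVES:
            nxt = (cur[0] + dr, cur[1] + dc)
            if in_bounds(nxt) and nxt not in known_obstacles and nxt not in parents:
                parents[nxt] = cur
                queue.append(nxt)
    return None  # goal unreachable under the current model


def run(start, goal):
    """Sense, update the model, replan, then take one step; repeat until done."""
    pos, known, trajectory = start, set(), [start]
    while pos != goal:
        sense(pos, known)
        path = plan(pos, goal, known)
        if path is None:
            return None
        pos = path[1]  # execute only the first step, then sense again
        trajectory.append(pos)
    return trajectory


if __name__ == "__main__":
    print(run((0, 0), (3, 3)))
```

The design choice worth noting is that the robot commits to only one step of each plan. Because the model may change after the next sensor reading, replanning each cycle is what lets the behaviour adapt rather than follow a fixed sequence.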

The Gemini Robotics-ER 1.6 Mechanism and Capabilities

The Gemini Robotics-ER 1.6 system builds on foundation models to provide this enhanced reasoning, and it integrates with the Gemini API to expose those capabilities to your application. You can tap into the underlying intelligence of models such as Gemini 3.0 Flash, optimized for efficiency and rapid response times. The core mechanism takes diverse sensor inputs from robotic platforms and uses these models to interpret complex scenes, infer object properties, and predict interaction outcomes.

The Gemini Robotics-ER 1.6 system has the following key features:

- Enhanced spatial reasoning and multi-view understanding for autonomous robotics

- Integration with the Gemini API for access to sophisticated AI capabilities

- Optimized models for efficiency and rapid response times
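To make the spatial-reasoning feature more concrete, here is a hedged sketch of the glue code a robot application might need after asking the model to locate objects in a camera frame. It assumes the model returns a JSON list of objects with a `point` given as `[y, x]` normalized to 0-1000 and a `label`, which is the convention documented for earlier Gemini Robotics-ER releases. Whether 1.6 keeps exactly this format should be checked against the current Gemini API documentation. The function names and the sample response are hypothetical, and no API call is made here.

```python
import json

# Built at runtime so this example does not contain a literal markdown fence.
FENCE = "`" * 3


def strip_fence(text):
    """Remove a surrounding markdown code fence if the model added one."""
    text = text.strip()
    if text.startswith(FENCE):
        parts = text.split("\n", 1)  # drop the opening fence line
        text = parts[1] if len(parts) > 1 else ""
        text = text.rsplit(FENCE, 1)[0]  # drop the closing fence
    return text.strip()


def parse_points(response_text, image_width, image_height):
    """Convert assumed [y, x] points on a 0-1000 scale into pixel coordinates."""
    items = json.loads(strip_fence(response_text))
    points = []
    for item in items:
        y_norm, x_norm = item["point"]  # assumption: y comes first
        points.append({
            "label": item.get("label", ""),
            "x": round(x_norm / 1000 * image_width),
            "y": round(y_norm / 1000 * image_height),
        })
    return points


if __name__ == "__main__":
    # Hypothetical model output for a 640x480 camera frame.
    sample = '[{"point": [500, 250], "label": "mug"}]'
    print(parse_points(sample, 640, 480))
    # [{'label': 'mug', 'x': 160, 'y': 240}]
```

In a real deployment the `response_text` would come from a Gemini API request that includes the camera image and a pointing prompt. The article does not give the model identifier for 1.6, so it is left out here.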

What This Means For Your Operations

The introduction of Gemini Robotics-ER 1.6 directly translates to a shift in how you conceptualize and implement robotic solutions. You are no longer solely focused on exhaustive instruction sets for every conceivable scenario. Instead, your focus can pivot towards enabling robots to autonomously adapt to the variability inherent in real-world tasks. This means reducing the overhead of manual programming for edge cases and improving the robustness of deployments in dynamic, unstructured environments.

The enhanced embodied reasoning capabilities aim to let your robots handle unexpected obstacles, manipulate unfamiliar objects, and perform complex tasks with a greater degree of independence. This can lead to more flexible and efficient automation workflows in industrial or everyday applications. You can expect improvements in areas such as:

- Increased autonomy in robotic tasks

- Improved adaptability to dynamic environments

- Enhanced efficiency in automation workflows

The Bottom Line for Developers

The Gemini Robotics-ER 1.6 system advances embodied reasoning for robotic applications. You can use it to build more autonomous, efficient, and adaptable robotic systems. By integrating the Gemini API, with models optimized for efficiency and rapid response times, you can enable your robots to make context-aware decisions and adapt to novel situations and physical constraints.

Originally reported by

Google DeepMind
