Your Defensive Cyber: Why Small, Specialized Models Beat Scale

CyberSecQwen-4B demonstrates how a 4B model can outperform an 8B specialist for specific defensive cyber tasks, runnable locally on your hardware. Learn what this means for your infrastructure.

Editorial Note

Reviewed and analyzed by the ScoRpii Tech Editorial Team.

Shifting Focus to Domain-Specific Efficiency

Your approach to frontier models needs to change. Instead of focusing solely on scale, prioritize model fitness for specific operational needs, especially in defensive cyber applications. The CyberSecQwen-4B model is a prime example of this shift: on narrow, well-evaluated cyber threat intelligence (CTI) tasks, it matches or exceeds the performance of larger models.

This includes critical operations such as Common Weakness Enumeration (CWE) classification, Common Vulnerabilities and Exposures (CVE) to CWE mapping, and structured CTI question-answering. By using a carefully fine-tuned 4B model, you can achieve high accuracy without the immense computational overhead typically associated with larger models.
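As a rough illustration of what a CVE-to-CWE mapping call might look like around such a model, the sketch below builds a prompt and pulls a CWE identifier out of free-form model output. The prompt wording, the function names, and the placeholder CVE ID are assumptions made for this example; they are not taken from the CyberSecQwen-4B model card, and the model call itself is left out.

```python
import re
from typing import Optional

# Matches identifiers such as "CWE-79" or "cwe-089".
# Assumption: CWE IDs have at most four digits.
CWE_PATTERN = re.compile(r"\bCWE-(\d{1,4})\b", re.IGNORECASE)


def build_prompt(cve_id: str, description: str) -> str:
    """Build a hypothetical CVE-to-CWE mapping prompt (illustrative wording)."""
    return (
        "Map the following vulnerability to its most specific CWE.\n"
        f"CVE: {cve_id}\n"
        f"Description: {description}\n"
        "Answer with a single identifier such as CWE-79."
    )


def extract_cwe(model_output: str) -> Optional[str]:
    """Return the first CWE ID in the output, normalized, or None if absent."""
    match = CWE_PATTERN.search(model_output)
    if match is None:
        return None
    # int() strips leading zeros so "cwe-089" becomes "CWE-89".
    return f"CWE-{int(match.group(1))}"


if __name__ == "__main__":
    prompt = build_prompt("CVE-0000-0000", "Unsanitized input reaches a SQL query.")
    print(prompt)
    print(extract_cwe("The weakness is cwe-089 (SQL injection)."))
```

Normalizing the identifier before comparing it with a reference label keeps an evaluation from penalizing harmless formatting differences in the model's answer.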

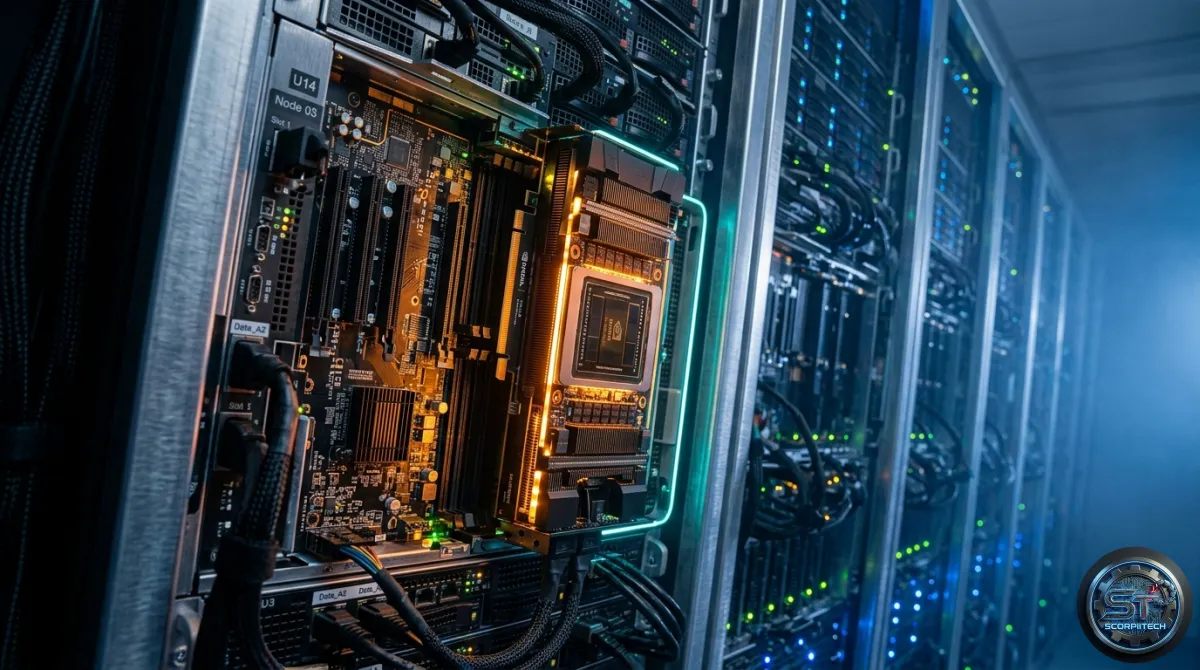

Training Environment and Hardware Advantage

CyberSecQwen-4B was built in a deliberately simple training environment. The entire pipeline, encompassing training, adapter merging, and evaluation, ran end-to-end on a single AMD Instinct MI300X 192 GB instance provided through the AMD Developer Cloud. Keeping everything on one device kept the workflow simple.

The combination of 192 GB of HBM3 memory and ROCm 7's vLLM stack removed the need for the memory-saving techniques that larger models often depend on. Specifically, the project did not need quantization tricks, gradient checkpointing, or model sharding across multiple devices. The pipeline ran in full bf16 precision, used FlashAttention-2 for both forward and backward passes, and trained with a batch size of 4 and a sequence length of 4096, all on a single GPU.
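To get a feel for why a single 192 GB device leaves so much room, here is a back-of-the-envelope sketch. It assumes exactly 4 billion parameters, bf16 weights and gradients, and an AdamW-style optimizer holding two fp32 moments per parameter (8 bytes). Those are illustrative assumptions rather than details from the source, and since the source mentions adapter merging, the actual run was likely adapter-based and lighter than this full fine-tuning estimate. Activation memory is not included.

```python
GIB = 1024 ** 3


def training_state_gib(num_params: int,
                       weight_bytes: int = 2,      # bf16 weights
                       grad_bytes: int = 2,        # bf16 gradients
                       optimizer_bytes: int = 8    # assumed: two fp32 moments
                       ) -> float:
    """Memory for weights, gradients, and optimizer state, in GiB.

    Excludes activations, which depend on the architecture and on the
    attention implementation.
    """
    per_param = weight_bytes + grad_bytes + optimizer_bytes
    return num_params * per_param / GIB


# Batch size and sequence length as stated in the article.
BATCH_SIZE = 4
SEQ_LEN = 4096
tokens_per_step = BATCH_SIZE * SEQ_LEN

if __name__ == "__main__":
    state = training_state_gib(4_000_000_000)
    print(f"weights + grads + optimizer: {state:.1f} GiB")
    print(f"tokens per step: {tokens_per_step}")
```

Under these assumptions the persistent training state comes to roughly 45 GiB, which is consistent with the article's point that no sharding or checkpointing was required on this hardware.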

Key Concepts and Techniques

Quantization is a technique used to reduce the memory footprint and computational requirements of neural networks, including LLMs. It involves representing weights and activations with fewer bits, often at the cost of minor performance degradation. Gradient checkpointing is another memory optimization technique used during the training of deep neural networks. It reduces memory usage by storing only a subset of intermediate activations and recomputing the rest during the backward pass, trading extra compute for lower memory.
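A minimal sketch of the quantization idea, assuming simple symmetric per-tensor int8 quantization (one of several schemes, and not something the CyberSecQwen-4B project used): each weight is scaled into the range -127 to 127, rounded, and later multiplied back by the scale. The function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization of floats to the int8 range."""
    max_abs = max(abs(w) for w in weights)
    # Avoid division by zero for an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Map int8 values back to approximate floats."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    for original, approx in zip(weights, restored):
        print(f"{original:+.4f} -> {approx:+.4f}")
```

The round trip is lossy: each restored value can differ from the original by up to half a quantization step, which is the source of the "minor performance degradation" mentioned above.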

The CyberSecQwen-4B project avoided these compromises due to ample GPU memory, streamlining the training process. Here are some key features and benefits of this approach:

- High accuracy without immense computational overhead

- Simplified workflow with no memory-saving workarounds during training

- Ability to deploy highly specialized LLMs on consumer-grade hardware

What This Means For You: On-Prem Defensive Cyber Capabilities

The implications of CyberSecQwen-4B's success are direct and significant for your defensive cyber operations. You can now realistically consider deploying highly specialized LLMs directly within your own infrastructure, even on consumer-grade hardware equipped with at least 12 GB of VRAM.
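The 12 GB figure can be sanity-checked with simple arithmetic. The sketch below estimates the weight footprint of a model at different precisions and checks it against a VRAM budget. It assumes exactly 4 billion parameters, treats the budget in GiB, and reserves a hypothetical 2 GiB of headroom for the KV cache and runtime overhead; real headroom depends on context length and the serving stack.

```python
GIB = 1024 ** 3

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "int8": 1}


def weight_footprint_gib(num_params: int, dtype: str) -> float:
    """Memory needed for the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / GIB


def fits_in_vram(num_params: int, dtype: str, vram_gib: float,
                 headroom_gib: float = 2.0) -> bool:
    """True if weights plus an assumed headroom fit in the VRAM budget."""
    return weight_footprint_gib(num_params, dtype) + headroom_gib <= vram_gib


if __name__ == "__main__":
    params = 4_000_000_000
    for dtype in BYTES_PER_PARAM:
        size = weight_footprint_gib(params, dtype)
        ok = fits_in_vram(params, dtype, vram_gib=12)
        print(f"{dtype}: {size:.2f} GiB of weights, fits in 12 GiB: {ok}")
```

At bf16, a 4B-parameter model needs about 7.5 GiB for weights, which is why a 12 GB card is a plausible floor without quantization, while an 8B model at the same precision would not fit.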

This capability offers several critical advantages, including reduced reliance on external cloud-based API services, enhanced data privacy and control over sensitive CTI, and lower network latency. Your teams can iterate faster on model improvements, fine-tuning models like CyberSecQwen-4B to address evolving threats without incurring massive cloud costs or dealing with complex distributed training setups.

The Bottom Line for Developers

CyberSecQwen-4B suggests that, for narrow and well-evaluated defensive cyber tasks, model fitness matters more than parameter count. A carefully fine-tuned 4B model can deliver high accuracy and run on consumer-grade hardware, which lets you reduce reliance on external cloud-based services, keep sensitive data under your own control, and improve response times in critical intelligence processing.

Originally reported by Hugging Face Blog
