Stop Answering the Same Question Twice: Interval-Aware Caching for Your Druid Deployments

Learn how Netflix solved repetitive query loads on Apache Druid with interval-aware caching, improving performance for your large-scale analytics.

Editorial Note

Reviewed and analyzed by the ScoRpii Tech Editorial Team.

The Challenge of Repetitive Queries at Scale

At extreme data volumes, query efficiency becomes critical. You have likely seen your analytics platform struggle with identical or near-identical queries executed over and over. Netflix is a good example: its Druid deployment reportedly holds on the order of 10 trillion rows and ingests up to 15 million events per second, so every redundant query is expensive.

Netflix's experience with Apache Druid highlights the importance of addressing repetitive query loads. Druid is an open-source, distributed data store designed for real-time analytics on large datasets, excelling at online analytical processing (OLAP) queries. However, its strength in ingesting data at high rates and serving low-latency queries can be overshadowed by the redundant work performed when handling repetitive queries.

Concept Refresher: Apache Druid

Druid's architecture consists of ingestion nodes, historical nodes for immutable data, and broker nodes that route and merge query results. Its ability to provide fast aggregations and complex filtering over high-cardinality data makes it suitable for operational analytics, business intelligence, and user behavior analysis. When integrated into your data stack, Druid offers a powerful platform for interactive analytics on data streams and historical archives.

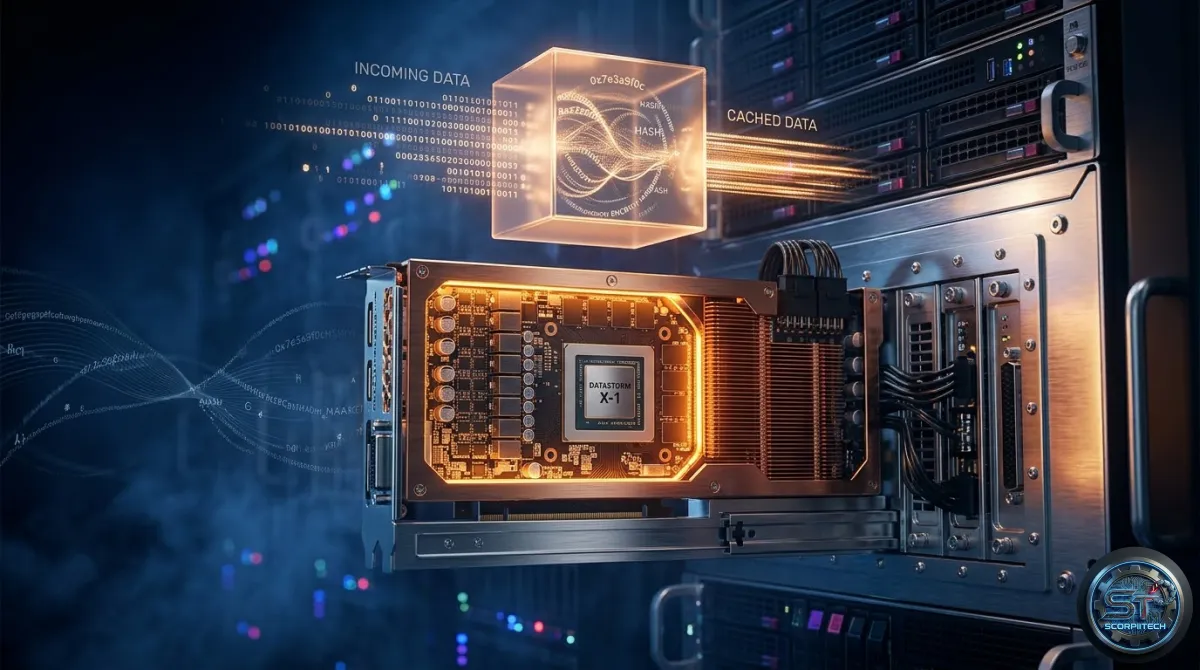

The Interval-Aware Caching Mechanism

To mitigate the impact of query repetition, Netflix implemented an interval-aware caching layer within their Druid Router. This solution intercepts queries and serves cached results when available, preventing redundant computation. The mechanism relies on generating a unique cache key for each query using an SHA-256 hash, ensuring that even minor differences in query parameters result in distinct cache keys.
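To make the key-generation step concrete, here is a minimal sketch in Python. It is not Netflix's implementation: the function name `cache_key` and the example query are hypothetical, and serializing the query as canonical JSON (sorted keys, fixed separators) before hashing is an assumption made so that two logically identical queries map to the same key.

```python
import hashlib
import json


def cache_key(query: dict) -> str:
    """Return a SHA-256 hex digest of a canonical JSON form of the query.

    Assumption: the query is a JSON-serializable dict. Sorting the keys
    means the same query with a different key order hashes identically,
    while any change to a parameter value yields a different key.
    """
    canonical = json.dumps(query, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical query payload, for illustration only.
query_a = {
    "queryType": "timeseries",
    "dataSource": "playback_events",
    "granularity": "minute",
    "intervals": ["2024-01-01T00:00:00Z/2024-01-01T01:00:00Z"],
}
# Same parameters, different key order.
query_b = dict(reversed(list(query_a.items())))
# One parameter changed.
query_c = {**query_a, "granularity": "hour"}

key_a = cache_key(query_a)
key_b = cache_key(query_b)
key_c = cache_key(query_c)
```

Here `key_a` and `key_b` are equal because only the key order differs, while `key_c` is different because one parameter changed. Whether your own key should cover the whole query or only part of it depends on how you want results to be shared across queries.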

Cache entries expire under two TTL policies: a relatively short 5-second TTL, and exponential TTLs for specific use cases. Together, these let the cache absorb repetitive queries without serving stale data for extended periods.
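The expiry behavior can be sketched as a small read-through cache. This is an illustration under stated assumptions, not the actual Router code: the article does not say how the exponential TTLs are computed, so the doubling rule in `exponential_ttl`, the `age_steps` parameter, and the one-hour cap are all hypothetical. Only the 5-second base TTL comes from the text above.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple

BASE_TTL_SECONDS = 5.0     # short TTL mentioned in the article
MAX_TTL_SECONDS = 3600.0   # hypothetical cap, not from the article

_MISSING = object()        # sentinel so a cached None still counts as a hit


def exponential_ttl(age_steps: int,
                    base: float = BASE_TTL_SECONDS,
                    cap: float = MAX_TTL_SECONDS) -> float:
    """Assumed rule: double the TTL for each step of data age, up to a cap."""
    return min(base * (2 ** max(age_steps, 0)), cap)


class TTLCache:
    """In-memory cache whose entries expire after a per-entry TTL."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._entries: Dict[Hashable, Tuple[float, Any]] = {}

    def put(self, key: Hashable, value: Any,
            ttl: float = BASE_TTL_SECONDS) -> None:
        self._entries[key] = (self._clock() + ttl, value)

    def get(self, key: Hashable, default: Any = None) -> Any:
        entry = self._entries.get(key)
        if entry is None:
            return default
        expires_at, value = entry
        if self._clock() >= expires_at:
            # Entry is stale: drop it and report a miss.
            del self._entries[key]
            return default
        return value

    def get_or_compute(self, key: Hashable, compute: Callable[[], Any],
                       ttl: float = BASE_TTL_SECONDS) -> Any:
        """Serve from cache on a hit; otherwise run the query and store it."""
        value = self.get(key, _MISSING)
        if value is _MISSING:
            value = compute()
            self.put(key, value, ttl)
        return value
```

In use, the router would call `get_or_compute(key, run_query_on_druid)` for each incoming query, where `run_query_on_druid` stands in for whatever forwards the query to the brokers. Injecting the clock keeps the expiry logic easy to test without sleeping.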

What This Means For You

If you're operating large-scale analytics platforms, especially with Apache Druid, Netflix's approach offers a clear architectural blueprint. Implementing an interval-aware caching layer in front of your Druid brokers can drastically reduce the load on your underlying data stores. By utilizing a robust hashing algorithm like SHA-256 for cache key generation and intelligent TTL strategies, you can:

- Significantly decrease the number of redundant queries hitting your Druid cluster, preserving compute and I/O resources.

- Improve query latency for frequently accessed data, offering a better experience for your users and applications.

- Increase the overall throughput and resilience of your analytics infrastructure, allowing it to scale more effectively without constant hardware provisioning.

The lesson here is not just about caching but about intelligent resource utilization. By making your systems smarter about the questions they answer, you can extract more value from your existing infrastructure and defer costly scaling initiatives.

The Bottom Line for Developers

When designing and optimizing your analytics infrastructure, consider the impact of repetitive queries on your system's performance. By implementing an interval-aware caching layer, you can significantly improve query efficiency, reduce latency, and increase overall system throughput. This approach can be particularly beneficial for large-scale analytics platforms, allowing you to scale more effectively and make the most of your existing infrastructure.

Originally reported by

Netflix Tech Blog (ML)
